An introduction to technical GEO (AEO)

As in SEO, GEO strategies can be broken down into a few key pillars, namely:

- Technical GEO

- Content GEO

- Entity GEO

- Brand Authority GEO

Here, we’re focusing on the fundamentals of Technical GEO — that means building a machine-readable foundation for your website and content so AI bots can easily access, understand, evaluate, and choose it for inclusion in their generated responses.

TLDR (Executive Summary):

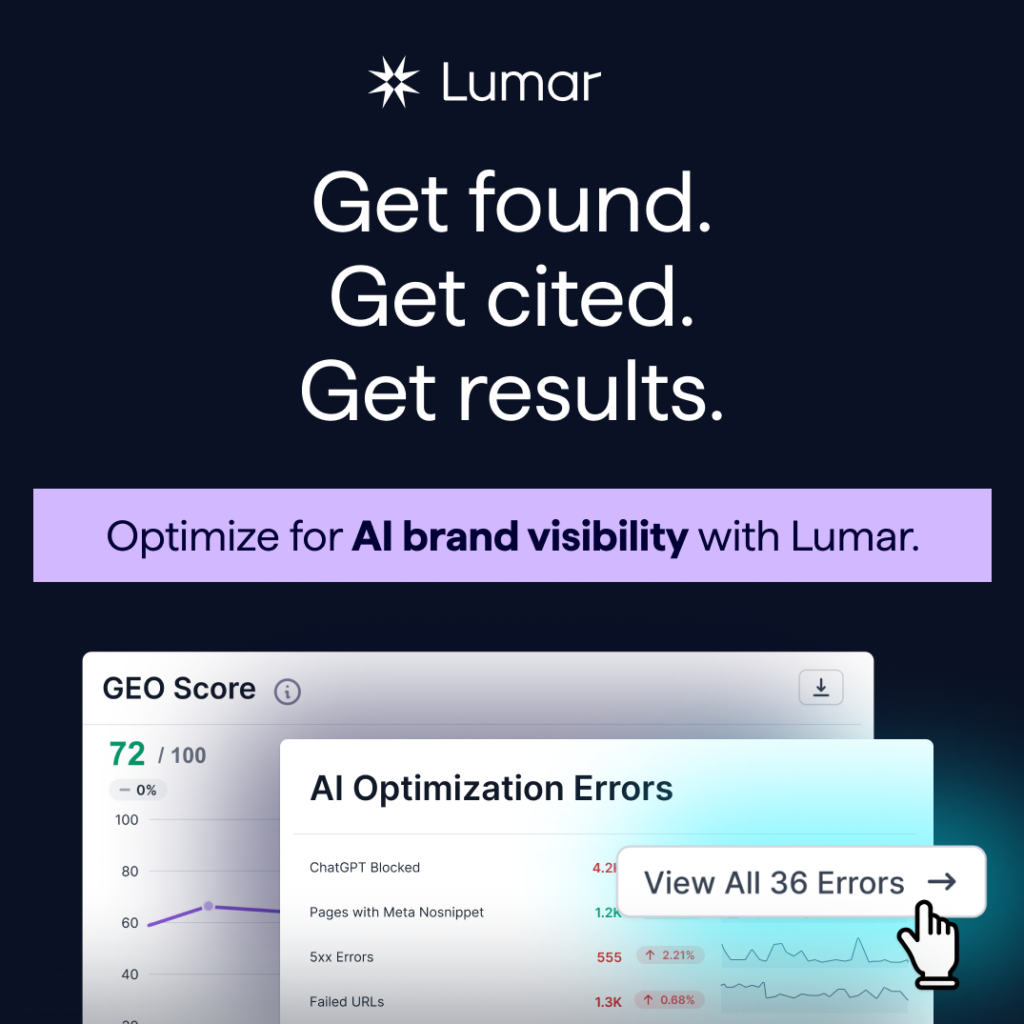

If your website blocks AI crawlers, hides key content behind JavaScript, contains indexability issues, or misuses nosnippet directives, your content may be invisible to AI search systems.

This Technical GEO guide explains the core technical fixes that help improve AI discoverability and citation eligibility across platforms like ChatGPT, Google AI Overviews, Gemini, Copilot, and Perplexity. Learn how crawlability, renderability, structured data, and search indexing all contribute to AI visibility.

About Lumar’s GEO / AEO Explainer Series:

In this Lumar series, we’re exploring tactics and strategies for generative engine optimization (GEO), also known as answer engine optimization (AEO) — that is, how to boost your brand’s visibility and likelihood of earning mentions or citations from LLMs and AI-powered platforms like ChatGPT, Claude, Gemini, Perplexity, or Google’s AI Overviews and AI Mode.

First of all, what IS Technical GEO?

Technical GEO ensures your website (and the content you host on it) is built in a way AI systems can access and process. It ensures your content can be discovered, rendered, and interpreted correctly by AI bots.

This technical layer is the backbone that allows your Content, Entity, and Brand Authority GEO strategies to function further down the funnel; if AI cannot properly access or understand the primary source of information about your brand (your website), chances are, your brand will not be fully or accurately included in AI answers.

Why implement a Technical GEO strategy?

Tech GEO influences multiple aspects of the generative AI process that takes a user’s prompt from asked to answered, including:

- AI Discoverability: How quickly AI and search engine bots discover new and updated content from your brand’s website.

- Content Freshness: Ensuring AI systems always extract the most current version of your facts and data.

- Citation Eligibility: Whether your content is technically eligible to be included in generated answers (i.e., AI bots are not blocked by robots.txt rules or nosnippet directives).

- AI Renderability: Whether your pages render correctly when accessed by AI systems — necessary for AI to ‘read’ your content as intended.

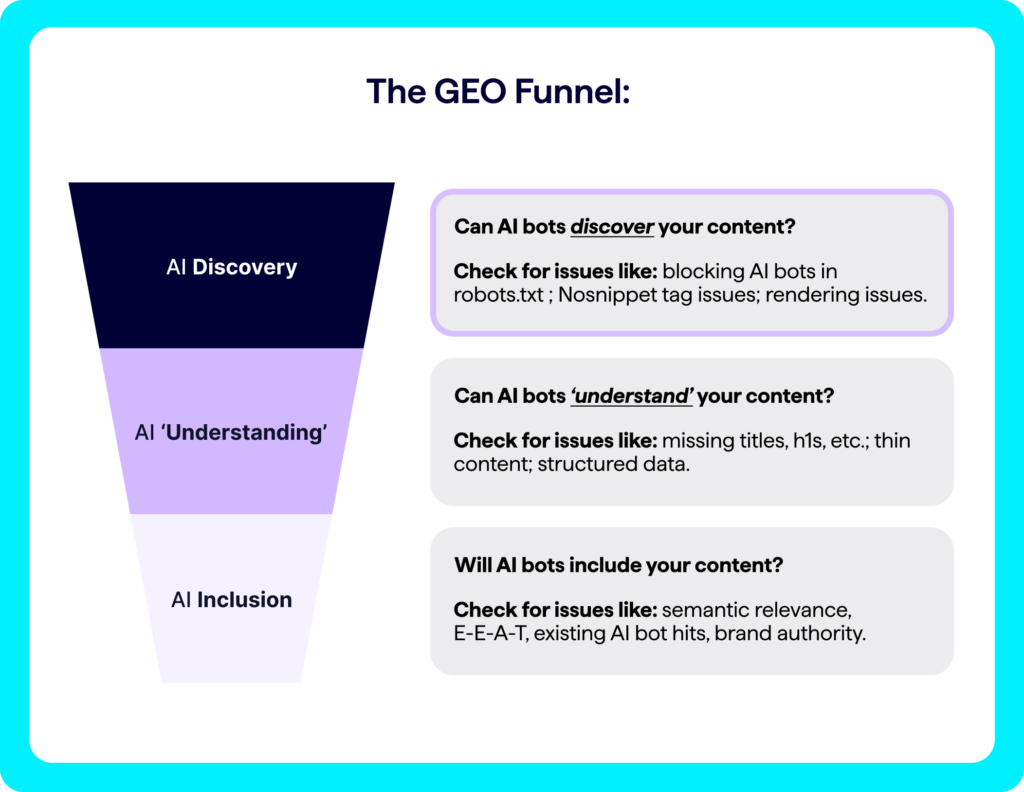

Technical GEO’s place in the AI visibility funnel

The focal point of Technical GEO is AI discoverability — making sure your content is discoverable (& permitted for use) by AI systems.

This aligns with the ‘Discovery’ stage in our GEO Funnel.

On Technical GEO:

“SEOs will need to understand how content is rendered [by AI systems] and ensure it is both crawlable and machine-readable.”

— Natalie Stubbs, Senior Technical SEO at Lumar

Key tips for technical GEO / AEO audits

Before we dive deeper into key tactics for Technical GEO, familiarize yourself with some of the key activities you’ll need to cover as part of your Tech GEO plan (many of these will sound familiar to those who work in Tech SEO!):

Your Tech GEO Checklist:

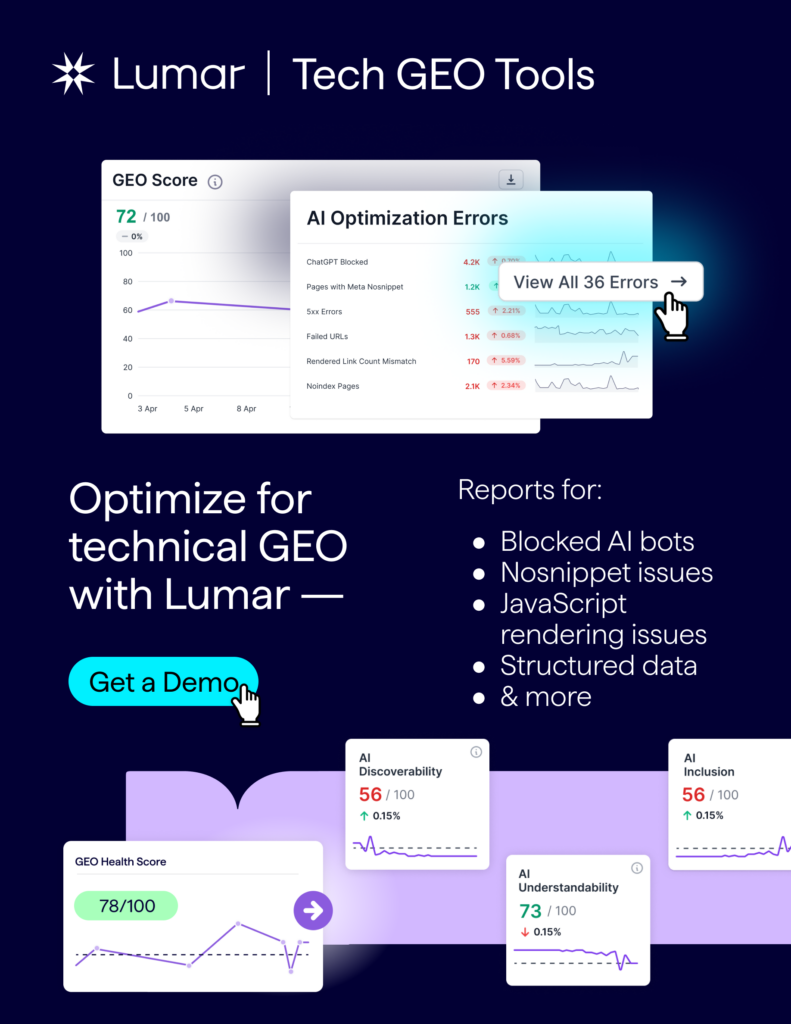

- Check that you are not blocking AI bots (like GPTBot, PerplexityBot, Google-Extended, Bingbot, CCBot) in your website’s robots.txt file.

- Ensure robots.txt rules and nosnippet directives are not preventing AI citation.

- Fix 4xx/5xx errors so AI bots can access your pages.

- Continue to optimize for indexability, as many AI tools still rely on traditional search engine indices to source the inputs that inform their generative responses.

- Implement structured data (schema) to make your website content more machine-readable.

- Maintain strong internal linking across topic clusters.

- Ensure key content is visible in the raw HTML (not only rendered via JavaScript, which many AI crawlers do not execute).

Now, let’s dig into some of these key action-plan items to ensure your Technical GEO is in good shape…

Robots.txt: Are you blocking AI bots?

For GEO, it is fundamental to ensure that AI bots can access your website’s content. If your site blocks AI bots (e.g., via robots.txt), these systems cannot crawl and process its content, which prevents that content from appearing in AI-generated summaries and directly hinders your AI search visibility.

If you want to appear in generative AI responses and AI search features, make sure your site isn’t unintentionally blocking important AI crawlers such as:

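- GPTBot — If you want your brand or content to be cited in ChatGPT responses, make sure you are not accidentally blocking OpenAI’s crawlers on your website. These include GPTBot, which collects training data, and OAI-SearchBot, which OpenAI uses to surface websites in ChatGPT search.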

- PerplexityBot — Perplexity is a major AI search engine. Blocking its crawler (PerplexityBot) from accessing your web pages can prevent your brand’s content from being included in its AI responses.

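- Google-Extended — Google-Extended is a robots.txt product token rather than a separate crawler: it controls whether content Google crawls may be used for training and grounding its Gemini models. To appear in Gemini, make sure your robots.txt does not disallow it. Note that Google states Google-Extended does not affect a site’s inclusion in Google Search, so AI Overviews and AI Mode eligibility depends on Googlebot access and indexing instead.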

- Bingbot or BingPreview — Microsoft’s Bing offers several controls that limit inclusion in the generative responses of Bing Chat (now Microsoft Copilot). If you want your brand to appear there, check that your site is not accidentally set to block inclusion.

- CCBot — Common Crawl is a non-profit organization that continuously crawls the public web and makes its massive archives and datasets freely available, in formats suited to research and development. These datasets are a foundational source of training data for a significant number of AI models, particularly large language models (LLMs). Blocking Common Crawl’s bot (CCBot) on your site limits AI models’ training exposure to your brand and content.
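If you want to spot-check the bots above against your own rules, Python’s standard-library `urllib.robotparser` can evaluate a robots.txt file. The sketch below is a minimal audit, assuming you have already downloaded your robots.txt as text; the sample rules and the `blocked_agents` helper are hypothetical. Be aware that `robotparser` applies rules in file order (first match wins) and matches user-agent tokens by substring, which is simpler than Google’s longest-match logic, so treat its output as a first pass rather than a definitive verdict.

```python
from typing import List, Sequence
from urllib.robotparser import RobotFileParser

# The robots.txt tokens for the AI-related bots discussed in this guide.
AI_AGENTS = ["GPTBot", "PerplexityBot", "Google-Extended", "Bingbot", "CCBot"]


def blocked_agents(robots_txt: str, url: str, agents: Sequence[str] = AI_AGENTS) -> List[str]:
    """Return the agents whose rules in robots_txt disallow fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    # Hypothetical rules: GPTBot is blocked everywhere, CCBot only under /private/.
    sample = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /private/

User-agent: *
Allow: /
"""
    print(blocked_agents(sample, "https://example.com/blog/post"))       # ['GPTBot']
    print(blocked_agents(sample, "https://example.com/private/report"))  # ['GPTBot', 'CCBot']
```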

Make your content available to AI

Before AI bots can crawl and parse your pages for content worth citing in their responses, those pages must be available for the bots to access. To enable your website’s content to be discovered and cited in AI-generated answers, make sure none of your target pages return availability errors, such as 4xx client errors (like 404 Not Found) or 5xx server errors. Neither traditional search bots nor AI bots can crawl pages that are unavailable or unreachable, which effectively excludes those pages from being referenced by generative models.
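A quick way to find availability errors is to request each target URL and record its final status code. The sketch below assumes that a plain GET with Python’s `urllib` (which follows redirects) is a fair proxy for what a crawler receives; real bots may be treated differently by CDNs or bot-management rules. The `fetch_status` and `unavailable_pages` names and the user-agent string are hypothetical, and network failures such as DNS errors (`urllib.error.URLError`) are left to propagate.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict, Iterable


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for url after urllib follows any redirects."""
    # Hypothetical user-agent string for the audit.
    request = urllib.request.Request(url, headers={"User-Agent": "availability-audit/0.1"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urlopen raises HTTPError for 4xx/5xx responses; the status is on err.code.
        return err.code


def unavailable_pages(
    urls: Iterable[str], get_status: Callable[[str], int] = fetch_status
) -> Dict[str, int]:
    """Map every URL that answers with a 4xx or 5xx status to that status code."""
    problems: Dict[str, int] = {}
    for url in urls:
        status = get_status(url)
        if 400 <= status <= 599:
            problems[url] = status
    return problems


if __name__ == "__main__":
    # Offline demo: a stub lookup stands in for live requests.
    stub = {"https://example.com/": 200, "https://example.com/old": 404, "https://example.com/api": 503}
    print(unavailable_pages(stub, get_status=stub.__getitem__))
    # {'https://example.com/old': 404, 'https://example.com/api': 503}
```

Passing the status lookup in as a parameter lets you reuse the same filter on status codes exported from an existing crawl instead of making fresh requests.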

Nosnippet issues for GEO

Are nosnippet tags preventing your content from appearing in AI responses?

In the era of GEO and AI search, the ability to control how your content is summarized is a powerful tool — or a critical issue if mismanaged.

The nosnippet directives (the robots meta tag, the X-Robots-Tag HTTP header, and the data-nosnippet HTML attribute) were originally designed to stop search engines from showing text snippets in traditional search results.

Today, at least in Google Search, these same directives also govern AI features. By preventing a snippet from being generated, a nosnippet directive also prevents your content from being used as a direct input for AI-generated responses such as AI Overviews, AI Mode, and other AI-powered SERP features.

The nosnippet meta tag is a crucial tool for GEO because Google has confirmed it prevents AI systems, including AI Overviews and AI Mode, from using a page’s content to generate AI-powered snippets and responses.

Per Google Search Central, the nosnippet directive tells Google:

“Do not show a text snippet or video preview in the search results for this page. A static image thumbnail (if available) may still be visible, when it results in a better user experience. This applies to all forms of search results (at Google: web search, Google Images, Discover, AI Overviews, AI Mode) and will also prevent the content from being used as a direct input for AI Overviews and AI Mode.”

And per Google Search Central’s documentation on appearing in Google AI features:

“Technical requirements for appearing in AI features: To be eligible to be shown as a supporting link in AI Overviews or AI Mode, a page must be indexed and eligible to be shown in Google Search with a snippet, fulfilling the Search technical requirements.”

Note: Lumar’s Nosnippet Reports for GEO are designed to help you audit these directives on your website, ensuring that your content is being used by AI systems exactly as intended, or, conversely, that a misplaced nosnippet tag isn’t accidentally cutting you off from a valuable source of AI visibility.
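To check a single page for the three nosnippet variants described above, you can scan its HTML and response headers. This is a minimal sketch using Python’s built-in `html.parser`; it assumes you already have the page HTML and its headers as a dict, checks only `robots` and `googlebot` meta tags, and reads a single `X-Robots-Tag` value (real responses can carry several). The class, function, and result key names are hypothetical.

```python
import re
from html.parser import HTMLParser
from typing import Dict, List

# Whole-word match, so "max-snippet" is not mistaken for "nosnippet".
NOSNIPPET = re.compile(r"\bnosnippet\b", re.IGNORECASE)


class NosnippetScanner(HTMLParser):
    """Record nosnippet signals found in a page's markup."""

    def __init__(self) -> None:
        super().__init__()
        self.meta_nosnippet = False
        self.data_nosnippet_elements: List[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)  # html.parser lowercases tag and attribute names
        if tag == "meta":
            name = (attributes.get("name") or "").lower()
            content = attributes.get("content") or ""
            if name in ("robots", "googlebot") and NOSNIPPET.search(content):
                self.meta_nosnippet = True
        if "data-nosnippet" in attributes:
            # Boolean attribute: it is present with value None, so test the key.
            self.data_nosnippet_elements.append(tag)


def nosnippet_report(html: str, headers: Dict[str, str]) -> dict:
    """Summarise meta, header, and element-level nosnippet usage for one page."""
    scanner = NosnippetScanner()
    scanner.feed(html)
    scanner.close()
    # HTTP header names are case-insensitive, so normalise before the lookup.
    x_robots = {key.lower(): value for key, value in headers.items()}.get("x-robots-tag", "")
    return {
        "meta_nosnippet": scanner.meta_nosnippet,
        "header_nosnippet": bool(NOSNIPPET.search(x_robots)),
        "data_nosnippet_elements": scanner.data_nosnippet_elements,
    }
```

A `True` in either of the first two fields affects the whole page, whereas `data-nosnippet` only excludes the marked elements, so the list of tags tells you where to look.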

JavaScript rendering issues for AI bots

What an AI bot “sees” is not always what a human sees — and may not even be what traditional search bots see. Content discrepancies between the raw HTML and the rendered page, which is processed after JavaScript is executed, can lead to a critical “content blind spot” for AI systems.

As Matt G. Southern reports in Search Engine Journal, while Googlebot is highly capable of rendering JavaScript, many other AI crawlers are not as advanced and still rely on the static HTML:

“Traditional search crawlers like Googlebot can read JavaScript and process changes made to a webpage after it loads … In contrast, many AI crawlers can’t read JavaScript and only see the raw HTML from the server. As a result, they miss dynamically added content…”

This creates a new layer of technical GEO to manage. If any of your key content — the headings, paragraphs, and data that provide content authority — is only loaded via JavaScript, your most valuable information may be invisible to AI platforms.

The challenge for GEO is to ensure that a website’s technical foundation is robust enough for all crawler bots (including AI crawlers), not just Google’s.

Note: Lumar’s AI Renderability reports are designed to help you diagnose and fix these JavaScript rendering issues, ensuring your content is seen and understood by the full spectrum of crawlers and AI bots.
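One low-cost way to find this blind spot is to check whether your key phrases appear in the server’s raw HTML at all, ignoring anything inside `<script>` or `<style>`. The sketch below assumes you supply the raw HTML (for example, the response body fetched without executing JavaScript) and a hypothetical list of phrases you expect crawlers to read. Comparing against a fully rendered DOM would need a headless browser, which this sketch does not attempt.

```python
from html.parser import HTMLParser
from typing import List


class RawTextExtractor(HTMLParser):
    """Collect text a non-JavaScript crawler could read directly from raw HTML."""

    SKIP = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Text inside <script>/<style> is code, not readable content, so ignore it.
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_raw_html(raw_html: str, key_phrases: List[str]) -> List[str]:
    """Return the key phrases that do not appear in the raw (unrendered) text."""
    extractor = RawTextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    # Normalise whitespace and case so markup boundaries don't break matches.
    text = " ".join(" ".join(extractor.chunks).split()).lower()
    return [phrase for phrase in key_phrases if " ".join(phrase.split()).lower() not in text]
```

Any phrase this returns is, at best, only present after JavaScript runs, which makes it a candidate for server-side rendering or static HTML.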

“I think AI is not a revolution in search; it’s just an extension. AI search strategies put more light on previously undervalued areas of SEO that some of us ignored or took for granted.

In some areas, it’s time to go back to basics, e.g., with rendering. Google told us for years that they manage to render pages well (I still have pretty large doubts), but now AI search crawlers again don’t render JavaScript, and we again need to optimize how we render content to make sure AI search crawlers can find it.

Making your site cheaper to both render and understand content is getting more and more important.”

— Patryk Wawok, Co-founder & Head of Technical SEO at Organic Hackers

Indexability & GEO

Website indexability issues (such as pages blocked by robots.txt or marked noindex, incorrect use of canonical tags, or use of the unavailable_after directive) don’t just impact your traditional SEO and SERP results; they also have a knock-on impact on AI search results and AI-generated answers.

As Barry Schwartz explains in Search Engine Land, “To show in Google AI Mode & AI Overviews, your page must be indexed.”
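The page-level signals mentioned above can be flagged from a page’s HTML and headers. This is a minimal sketch, assuming you already have the HTML and any `X-Robots-Tag` value; robots.txt blocking needs a separate check. A canonical tag pointing elsewhere is often intentional (for example, on parameterised duplicates), so the hypothetical `indexability_flags` helper returns flags for review rather than verdicts.

```python
import re
from html.parser import HTMLParser
from typing import List, Optional
from urllib.parse import urljoin


class IndexabilityScanner(HTMLParser):
    """Collect meta robots directives and the canonical link from raw HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.robots_directives: List[str] = []
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            self.robots_directives.append((attributes.get("content") or "").lower())
        elif tag == "link" and "canonical" in (attributes.get("rel") or "").lower().split():
            self.canonical = attributes.get("href")


def indexability_flags(page_url: str, html: str, x_robots_tag: str = "") -> List[str]:
    """Flag noindex, unavailable_after, and canonicals that point to another URL."""
    scanner = IndexabilityScanner()
    scanner.feed(html)
    scanner.close()
    directives = " ".join(scanner.robots_directives + [x_robots_tag.lower()])
    flags: List[str] = []
    # "none" is shorthand for "noindex, nofollow".
    if re.search(r"\b(noindex|none)\b", directives):
        flags.append("noindex")
    if "unavailable_after" in directives:
        flags.append("unavailable_after")
    if scanner.canonical:
        canonical = urljoin(page_url, scanner.canonical)  # resolve relative hrefs
        if canonical.rstrip("/") != page_url.rstrip("/"):
            flags.append(f"canonical points to {canonical}")
    return flags
```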

ChatGPT & Bing’s search index

If you were previously concerned primarily with getting your content indexed by Google, it may be time to expand your scope for GEO… because ChatGPT’s search function appears to rely heavily on Bing’s search index.

Per OpenAI’s announcement about ChatGPT Search:

“ChatGPT search leverages third-party search providers, as well as content provided directly by our partners, to provide the information users are looking for.”

And as Danny Goodwin writes in Search Engine Land, “. . . One of those third-party search providers is Microsoft Bing. Based on its ChatGPT search help document, OpenAI is sharing search query and location data with Microsoft.”

Structured data & GEO

How structured data may impact AI visibility:

While the core training of large language models (LLMs) used in AI bots primarily relies on vast quantities of unstructured data and text, many AI-powered search features (like Google’s AI Overviews) that synthesize information from existing search engine indices may also indirectly benefit from structured data. This is because structured data helps traditional search engines better understand, categorize, and surface relevant content, which in turn can improve the chances of that content being selected and leveraged by the AI for its generative responses.

LLMs are trained on massive text datasets, predominantly unstructured human language (books, articles, web pages). Most AI systems’ “understanding” of context comes primarily from patterns in natural language, not from directly parsing and interpreting schema.org markup the way a search engine’s indexing system does.

But AI-powered search features from Google (like AI Overviews) and Microsoft (Copilot) do not re-crawl the web independently for every query. Instead, they leverage the vast, continuously updated indices of their respective search engines (Google Search Index, Bing Search Index). And structured data plays a crucial role in how today’s search engines understand, organize, and retrieve information from the web.

Copilot’s knowledge sources documentation, for example, indicates a direct reliance on the Bing search index:

“When turned on, Web Search triggers when a user’s question might benefit from information on the web. It searches all public websites indexed by Bing.”

And remember that Google Search Central documentation on appearing in AI features that we mentioned previously? It says:

“Technical requirements for appearing in AI features: To be eligible to be shown as a supporting link in AI Overviews or AI Mode, a page must be indexed and eligible to be shown in Google Search with a snippet, fulfilling the Search technical requirements. There are no additional technical requirements.”

If structured data helps enable traditional SERP snippets, and some LLMs and AI search features rely on traditional search engine indices for up-to-date information, then having proper structured data in place may also help your content appear in these AI-powered search features.

In short, while AI itself may not “read” structured data in a semantic way during its core training, structured data is vital to how traditional search engines ingest, understand, and organize information. Since AI platforms and AI SERP features rely on these organized search indices to retrieve the most current information, a robust structured data implementation on your website may have the knock-on effect of improving the chances that your content is considered relevant and chosen as a source for AI-generated responses.
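As a concrete example of the kind of markup this section refers to, the sketch below builds a schema.org `Organization` block in JSON-LD, the format Google recommends for structured data. The company name, URLs, and the `organization_jsonld` helper are placeholders; which types and properties are worth marking up depends on your content, and valid markup does not guarantee inclusion in any AI feature.

```python
import json
from typing import List


def organization_jsonld(name: str, url: str, logo: str, same_as: List[str]) -> str:
    """Return a <script> tag containing schema.org Organization markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # other profiles that refer to the same entity
    }
    # Escape "</" (valid JSON as "<\/") so no value can close the <script> early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(organization_jsonld(
        name="Example Co",
        url="https://www.example.com",
        logo="https://www.example.com/logo.png",
        same_as=["https://www.linkedin.com/company/example-co"],
    ))
```

Generating the block from one data source, rather than hand-editing it per page, is one way to keep the metadata consistent across a site.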

On Structured Data & Knowledge Graph Integration:

“Structured data is no longer only about getting rich results within Google’s search results. It’s the foundation for machines to make sense of their training data. As language models keep getting smarter, the winners are going to be the brands that treat their website’s content like data: interconnected, contextual, and queryable.

Schema markup, linked-up entities, and keeping your metadata consistent will aid LLMs in interpreting meaning rather than just words on a page.”

— Jonathan Clark, Managing Partner at Moving Traffic Media

A further consideration on GEO/AEO and structured data comes from Jarno van Driel’s interview on the Search With Candour podcast.

When asked, “With the growth of … natural language processing … do you think that shifts how important structured data is going forward?”, van Driel responded:

“The question is, is it financially viable? … The big advantage of structured data — any structured data set, it doesn’t necessarily have to be JSON-LD — the big advantage of those is that they really require little compute to be turned into something useful. And the biggest issue with all those LLMs, even the next generation five or ten years from now, is that it will cost a lot more compute to analyze unstructured content than to digest a piece of structured content.

So even if meaning or understanding are not involved, it’s just a matter of money. It’s cheaper for any party to deal with structured data than to turn unstructured data into structured data to be able to use it. So that’s really the biggest benefit; it’s just a cost-efficient way for those parties to do something with data. Knowledge graphs and those kinds of repositories wouldn’t function without those large [structured] datasets.”
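To make van Driel’s cost argument concrete: once JSON-LD is on a page, turning it into usable data is a parse rather than an interpretation step. This minimal sketch, using only Python’s standard library, pulls every `application/ld+json` block out of a page; the class and function names are hypothetical, and malformed blocks are simply skipped.

```python
import json
from html.parser import HTMLParser
from typing import Any, List


class JsonLdExtractor(HTMLParser):
    """Collect and decode the contents of <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: List[str] = []
        self.blocks: List[Any] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and (dict(attrs).get("type") or "").lower() == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script content may arrive in several chunks, so buffer it.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # skip malformed blocks in this sketch


def extract_jsonld(html: str) -> List[Any]:
    """Return every decoded JSON-LD block found in html, in document order."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.blocks
```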

Further learning: strategies to build your brand’s AI visibility

Here, we’ve covered the essentials of Technical GEO. In Lumar’s comprehensive GEO / AEO Strategy eBook, “Your AI Search Playbook,” you can learn tactics to cover the other 3 pillars of a strong AI search strategy, including:

- Content GEO

- Entity GEO

- Brand Authority GEO