Feature Release: Splunk API Log File Integration

We have an exciting announcement for Splunk users. Cue the latest DeepCrawl feature release!

We’re introducing a brand new integration with Splunk for a more comprehensive view of your log files.

We believe in log file analysis. Log files are a window into the world of search engines because they show you exactly how search engine bots access and handle your website. To harness this view, last year we integrated log file data into our platform through a manual uploads feature. Now we have taken this one step further by merging crawl data with Splunk log file summary data, so you can get more value out of every crawl.

What Is Splunk?

Splunk is one of the industry’s leading tools for analysing machine data. It provides invaluable insights into server log files and tracks the events that occur on a website. One of Splunk’s defining features is the sheer scale of data it can process, which makes it a reliable companion for enterprise businesses. Scalable machine data analysis and a cloud-based website crawler are a match made in heaven, so we decided to join forces!

Combining Splunk Log File Data with Crawl Data

These are the key benefits of integrating Splunk within your DeepCrawl account:

- One dashboard with a complete view of all URLs and requests

- Fresh Splunk log file data pulled through with every crawl

- No more manual uploads for your log files

- Get more value out of every crawl with an extra crawl source

- Combine log file data with other crawl sources (e.g. Google Analytics and Google Search Console) for a more holistic view of every URL

By combining crawl data with your Splunk log file data, you will be able to see the number of requests each of your pages receives, shown alongside the wide variety of other metrics included in our crawls. This is especially useful when you run regular crawls, as you can track changes in response codes and the indexability of your pages over time, and see how those changes affect both bot and user requests. If your crawls are scheduled more frequently than search engines visit your pages, you will even be one step ahead, and will be able to fix issues on your pages before the search engines reach them!

How Can I Add Splunk Data to My Crawl?

This feature is available for anyone with a Splunk Enterprise or Splunk Cloud account. Simply get in touch with your DeepCrawl account manager from our Customer Success team, and they will help you out with the next steps of the integration setup.

If you don’t have a dedicated DeepCrawl account manager but would like to integrate your Splunk account with us, then please get in touch.

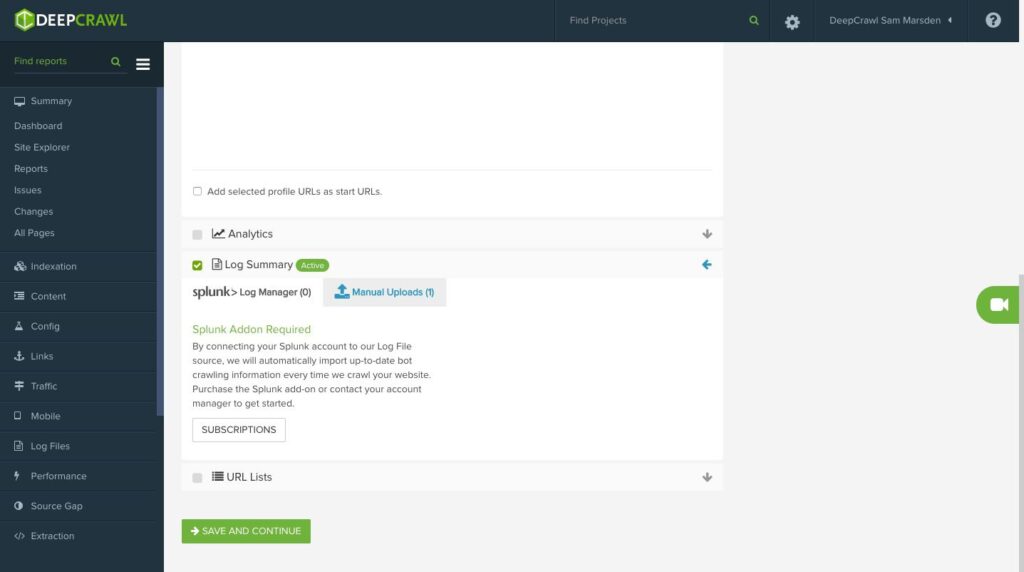

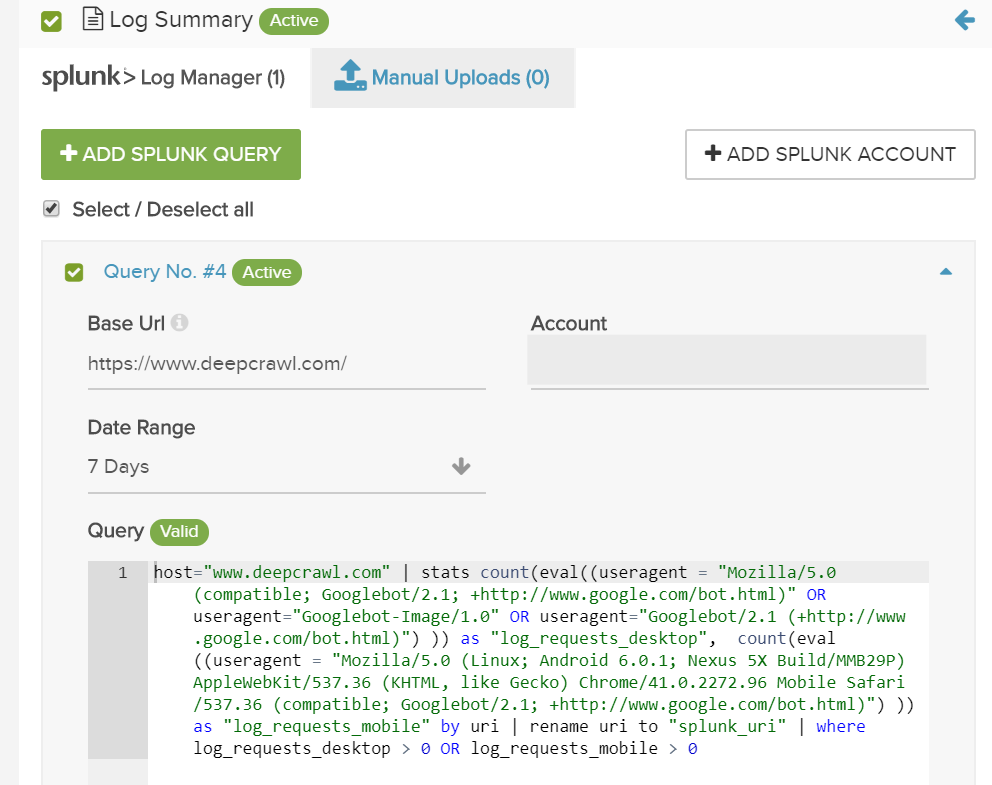

You will find the settings for the Splunk integration within the ‘Log Summary’ section of the crawl source settings. Here you can review and configure the setup, and this is also where your Splunk queries are stored.

We Will Help with the Setup

Linking your DeepCrawl project with your Splunk account won’t be a one-click process like our other integrations, but don’t worry, we will do the setup for you. Every log file format is slightly different, and writing queries that extract the right information can get tricky, so we will work with you to find the best query for your logs.
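To illustrate why the query depends on your log format, here is a minimal Python sketch of the kind of per-URL summary a log query produces: total requests and bot hits for each path. It assumes the common Apache/Nginx "combined" log format and identifies bots by a user-agent substring (the hypothetical `bot_token` parameter, defaulting to "Googlebot"). The function name `summarise` and the sample lines are illustrative only; the actual integration runs a Splunk query tailored to your logs rather than this code.

```python
import re
from collections import Counter

# Assumption: logs use the Apache/Nginx "combined" format. Other layouts
# (custom fields, CDN logs, JSON logs) would need a different pattern.
COMBINED = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"$'
)


def summarise(lines, bot_token="Googlebot"):
    """Return {path: (total_requests, bot_hits)} for well-formed log lines.

    Bots are identified purely by a user-agent substring, which is an
    assumption made for brevity (user agents can be spoofed).
    """
    requests, bot_hits = Counter(), Counter()
    for line in lines:
        match = COMBINED.match(line.strip())
        if match is None:
            continue  # skip lines that do not fit the assumed format
        path = match.group("path")
        requests[path] += 1
        if bot_token in match.group("agent"):
            bot_hits[path] += 1
    return {path: (requests[path], bot_hits[path]) for path in requests}


# Hypothetical sample lines for illustration.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE = [
    f'66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /products HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.5 - - [10/Oct/2023:13:56:01 +0000] "GET /products HTTP/1.1" 200 512 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0)"',
    f'66.249.66.1 - - [10/Oct/2023:13:57:12 +0000] "GET /old-page HTTP/1.1" 404 0 "-" "{GOOGLEBOT_UA}"',
    "this line is not in combined log format",
]

if __name__ == "__main__":
    print(summarise(SAMPLE))
```

A log in a different layout would simply fail to match the pattern and be skipped, which is exactly why each query needs to be written against your own log format.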

If you let your account manager or another member of the DeepCrawl team know that you want to integrate Splunk, we will do the rest. Get in touch if you need any extra assistance with your Splunk integration or have any further questions.

What Data Will You Be Seeing from Splunk?

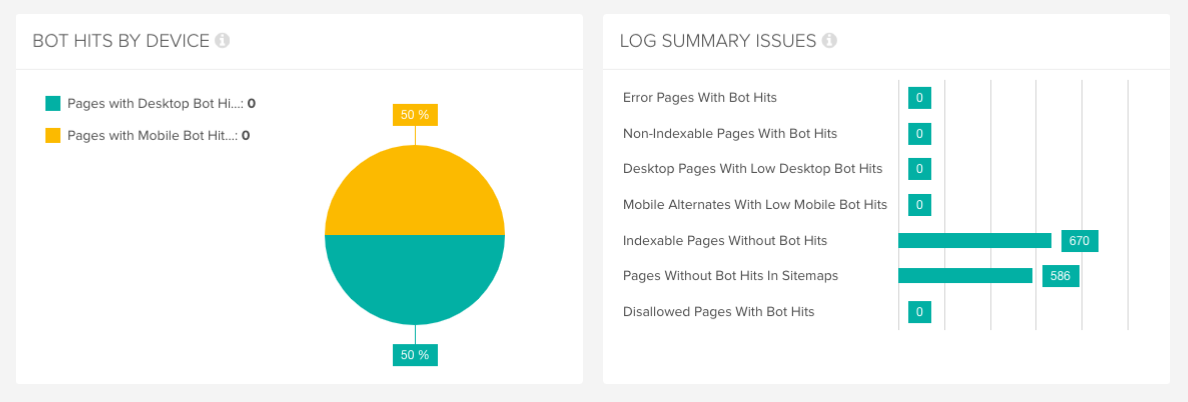

When Splunk has been integrated, your crawl will return your site’s URLs along with the number of requests and bot hits. This data will feed into the log file reports we provide, including:

- Error pages with bot hits

- Non-indexable pages with bot hits

- Indexable pages without bot hits

- Mobile alternates with low mobile bot hits

- Pages without bot hits in sitemaps

And more!
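As a rough illustration of how crawl data and log summary data combine, the following Python sketch joins crawled pages with per-URL bot hit counts and sorts them into report-style buckets. The `CrawledPage` fields, the report names and the rule "error page = status 400 or above" are hypothetical simplifications for illustration, not DeepCrawl’s actual report logic; the mobile alternates report is omitted because it needs extra data.

```python
from dataclasses import dataclass


@dataclass
class CrawledPage:
    # Hypothetical subset of crawl metrics for one URL.
    url: str
    status: int
    indexable: bool
    in_sitemap: bool


def log_reports(pages, bot_hits):
    """Group crawled pages into simplified log file reports.

    bot_hits maps URL -> bot request count from the log summary; URLs
    missing from the summary are treated as having zero bot hits.
    """
    reports = {
        "error_pages_with_bot_hits": [],
        "non_indexable_pages_with_bot_hits": [],
        "indexable_pages_without_bot_hits": [],
        "sitemap_pages_without_bot_hits": [],
    }
    for page in pages:
        hits = bot_hits.get(page.url, 0)
        if page.status >= 400 and hits > 0:
            reports["error_pages_with_bot_hits"].append(page.url)
        if not page.indexable and hits > 0:
            reports["non_indexable_pages_with_bot_hits"].append(page.url)
        if page.indexable and hits == 0:
            reports["indexable_pages_without_bot_hits"].append(page.url)
        if page.in_sitemap and hits == 0:
            reports["sitemap_pages_without_bot_hits"].append(page.url)
    return reports


# Hypothetical example data.
PAGES = [
    CrawledPage("/products", 200, True, True),
    CrawledPage("/old-page", 404, False, False),
    CrawledPage("/filter?colour=red", 200, False, False),
    CrawledPage("/new-guide", 200, True, True),
]
BOT_HITS = {"/products": 1, "/old-page": 1, "/filter?colour=red": 3}

if __name__ == "__main__":
    for name, urls in log_reports(PAGES, BOT_HITS).items():
        print(name, urls)
```

A page can appear in more than one report (the 404 page above is both an error page and non-indexable), which mirrors how one URL can surface several issues at once.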

Problems You Can Solve with the Splunk Integration

Integrating your Splunk log file data with crawl insights will enable you to optimise the journey a search engine bot takes when crawling your site. You can see where bots are making requests on your site, where they have had difficulty reaching pages that add value, and where they have found their way to low-value pages that you would rather keep out of reach.

Take a look at our guide on getting more from your underutilised log file data for actionable insights into improving the technical health of your website.

Want to find out more about how to integrate your data? You can read our product guide or contact us today.