Feature Release: DeepCrawl’s JavaScript Rendered Crawling

We are excited to announce the launch of JavaScript Rendered Crawling with DeepCrawl. Our latest feature vastly strengthens DeepCrawl’s crawling capabilities, allowing you to crawl the rendered version of pages on sites that use JavaScript to modify their content.

Why is Rendered Crawling Important?

More and more websites rely on JavaScript, and search engines have improved their page rendering functionality in response, making JavaScript testing an increasingly important part of technical SEO. Given this progress, it is essential that you know whether Google is able to crawl and index the rendered version of your pages as well as the HTML version.

Enter DeepCrawl.

We have enhanced our market-leading crawler to allow you to understand the technical health of pages that use JavaScript to inject content and links into the DOM.

By enabling our latest feature you can:

- Find out if content and links modified by JavaScript are rendered correctly for search engines to index.

- Find out how crawlable your pages are with and without JavaScript rendering enabled.

- Detect JavaScript URL changes, including redirects, meta redirects and JS location changes.

- Cache resources found in a crawl so that your server is not overloaded.

Crawling JavaScript Sites With DeepCrawl

With our latest release, DeepCrawl users can now enable JavaScript rendering to analyse the rendered version of pages on their site.

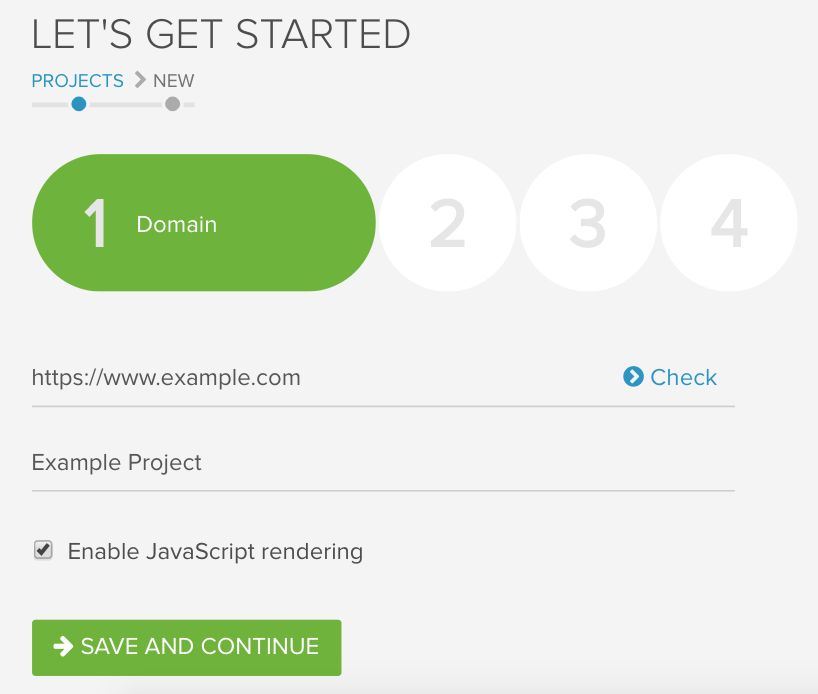

Here’s how you can get going with DeepCrawl’s new rendering capabilities:

- Before the crawl: Set up a new crawl and enable JavaScript Rendering in the first stage of the setup, making sure to add in any custom requirements in Advanced Settings.

- During the crawl: Pages which inject links and content into the DOM will be crawled and the rendered DOM will be parsed.

- After the crawl: Once the crawl has completed you will be able to view all of DeepCrawl’s reports based on the rendered version of the page for all of the URL sources you’ve uploaded to the crawl.

The Nuts and Bolts of Our Latest Release

Before you go and set off a crawl with JavaScript rendering for yourself, here are some handy details about our latest update:

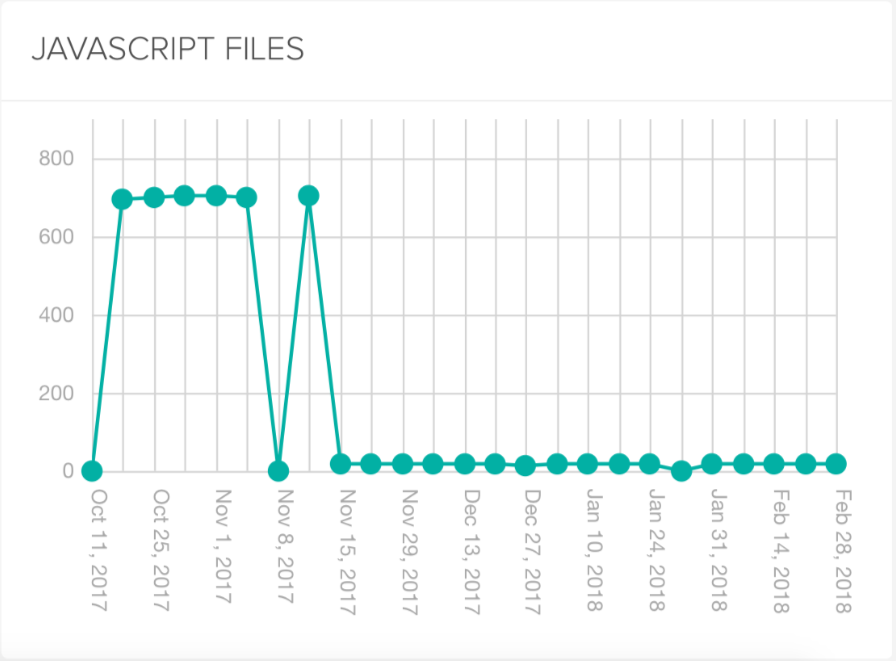

Find Out How Pages Compare Before and After Rendering

While JavaScript Rendering is our shiny new feature, it is still worth crawling with and without it enabled so that you can compare what is returned before and after rendering.

It’s important to understand how a site looks before it has been rendered, because Google indexes the HTML version of a page first and there is a delay before it indexes the rendered version. While Google is working to reduce this delay, making body content available pre-rendering is recommended, especially for sites with time-sensitive content.
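To make the before-and-after comparison concrete, here is a minimal Python sketch that diffs the links found in a page's raw HTML against those in its rendered DOM. It is an illustration only, not DeepCrawl's implementation: the function names and both sample pages are hypothetical, and it assumes you have already saved the two versions of the page as strings.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag it sees."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.add(value)


def extract_links(html):
    """Return the set of link targets in an HTML string."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.links


def compare_links(raw_html, rendered_html):
    """Group links by whether they exist before and/or after rendering."""
    raw = extract_links(raw_html)
    rendered = extract_links(rendered_html)
    return {
        "only_after_rendering": sorted(rendered - raw),
        "only_before_rendering": sorted(raw - rendered),
        "in_both": sorted(raw & rendered),
    }


# Hypothetical sample: the /products link is injected by JavaScript.
RAW_HTML = '<html><body><a href="/home">Home</a><div id="app"></div></body></html>'
RENDERED_HTML = (
    '<html><body><a href="/home">Home</a>'
    '<div id="app"><a href="/products">Products</a></div></body></html>'
)

result = compare_links(RAW_HTML, RENDERED_HTML)
print(result["only_after_rendering"])
```

Links that appear only after rendering are the ones a search engine cannot discover until it has rendered the page, so they are the ones most affected by the indexing delay.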

Rendering Timeouts That Reflect What Search Engines See

While rendering a page, DeepCrawl uses a default timeout of about 10 seconds, after which the current DOM is used. This timeout is comparable to the one search engines use, so it highlights slow pages whose content takes too long for search engines to render.

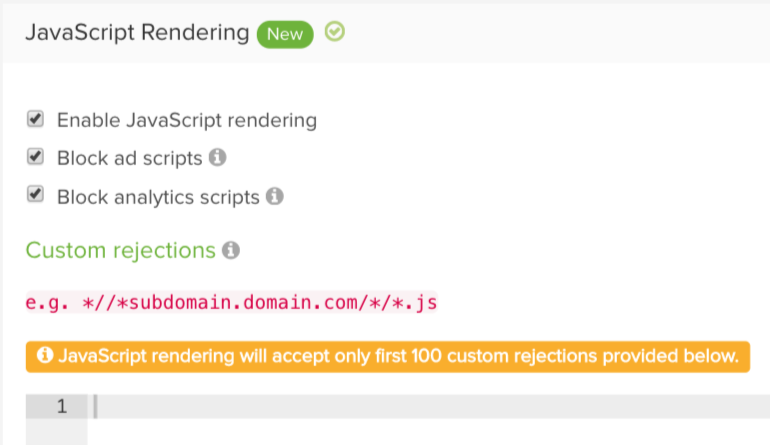
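The timeout behaviour described above can be modelled in a few lines of Python. This is a simplified sketch, not DeepCrawl's implementation: it assumes a hypothetical list of timestamped DOM snapshots and simply returns whichever snapshot was current when the timeout expired.

```python
from bisect import bisect_right

# The post gives the default as "about 10 seconds"; the exact value is assumed.
DEFAULT_TIMEOUT_SECONDS = 10.0


def dom_at_timeout(snapshots, timeout=DEFAULT_TIMEOUT_SECONDS):
    """Return the latest DOM recorded at or before the timeout.

    snapshots is a list of (seconds_since_load_start, dom_html) tuples
    sorted by time. Returns None if nothing was recorded in time.
    """
    times = [t for t, _ in snapshots]
    index = bisect_right(times, timeout)
    if index == 0:
        return None
    return snapshots[index - 1][1]


# Hypothetical page: the last piece of content arrives after 14 seconds.
SNAPSHOTS = [
    (0.0, "<div id='app'></div>"),
    (2.5, "<div id='app'><h1>Title</h1></div>"),
    (14.0, "<div id='app'><h1>Title</h1><p>Late content</p></div>"),
]

dom = dom_at_timeout(SNAPSHOTS)
late_content_missed = "Late content" not in dom
print(late_content_missed)
```

In this example the paragraph that arrives after 14 seconds never makes it into the DOM that gets analysed, which is the kind of slow content the timeout is designed to surface.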

Ad Tracking and Analytics Scripts Blocked by Default

Crawling with JavaScript Rendering enabled won’t cause any analytics or ad serving resources to be triggered. We use a predefined list of tracking scripts which are blocked by default to ensure crawling doesn’t result in unwanted ad or analytics impressions. As a secondary control, you have the option of blocking any other resources by matching URL paths in the Custom Rejections field found in Advanced Settings.
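As a rough illustration of the two layers of blocking, the Python sketch below checks a resource URL against a default host blocklist and then against custom path patterns. Everything here is an assumption made for the example: the two hosts are stand-ins rather than DeepCrawl's actual list, `is_blocked` is a hypothetical helper, and plain substring matching on the path may not be how the Custom Rejections field interprets its patterns.

```python
from urllib.parse import urlsplit

# Illustrative stand-ins only; this is NOT DeepCrawl's predefined list.
DEFAULT_BLOCKED_HOSTS = {
    "www.google-analytics.com",
    "www.googletagmanager.com",
}


def is_blocked(url, custom_rejections=()):
    """Return True if the resource should not be requested during rendering."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    # Layer 1: tracking and ad hosts blocked by default.
    if host in DEFAULT_BLOCKED_HOSTS:
        return True
    # Layer 2: user-supplied patterns matched against the URL path
    # (assumed here to be simple substring matches).
    return any(pattern in parts.path for pattern in custom_rejections)


print(is_blocked("https://www.google-analytics.com/analytics.js"))
print(is_blocked("https://example.com/static/chat-widget.js",
                 custom_rejections=["/static/chat-"]))
```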

Resource Caching Avoids Overloading Servers

As part of our rendering, we cache the resources encountered during a crawl so that your server won’t be overloaded by individual rendering servers repeatedly requesting the same resources.

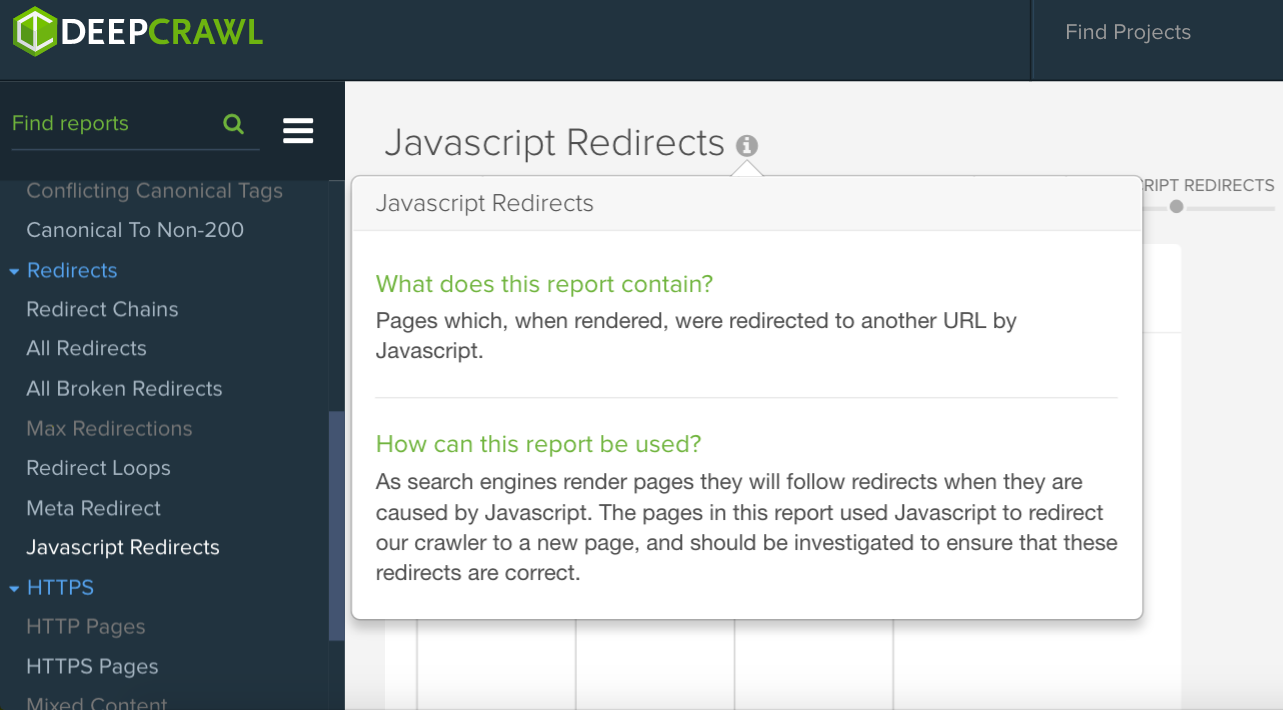
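The effect of resource caching is easy to see in a small Python sketch. This is a simplified, single-process model rather than DeepCrawl's implementation: `ResourceCache`, `fake_fetch` and the sample pages are all hypothetical, and a real crawler would share the cache across rendering servers and respect expiry rules.

```python
class ResourceCache:
    """Fetches each resource from the origin server at most once."""

    def __init__(self, fetch):
        self._fetch = fetch          # function that performs the real request
        self._store = {}             # url -> cached response body
        self.origin_requests = 0     # how many times the origin was hit

    def get(self, url):
        if url not in self._store:
            self.origin_requests += 1
            self._store[url] = self._fetch(url)
        return self._store[url]


def fake_fetch(url):
    """Stand-in for an HTTP request so the example runs offline."""
    return f"/* contents of {url} */"


# Hypothetical crawl: three pages sharing most of their resources.
PAGES = {
    "/": ["/static/app.js", "/static/site.css"],
    "/about": ["/static/app.js", "/static/site.css"],
    "/contact": ["/static/app.js", "/static/contact.js"],
}

cache = ResourceCache(fake_fetch)
total_lookups = 0
for resources in PAGES.values():
    for resource in resources:
        cache.get(resource)
        total_lookups += 1

print(total_lookups, cache.origin_requests)
```

Six resource lookups across the three pages result in only three requests to the origin server, one per distinct resource.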

Identify JavaScript Redirects

Our rendering update shows when JavaScript redirects have occurred, so you can find pages which are redirecting users.
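For a sense of what these redirects look like in a page's source, here is a minimal Python sketch that flags meta refresh tags and common `location` assignments. Note that this is a static heuristic swapped in for illustration: a rendering crawler observes the navigation as it actually happens, whereas this hypothetical `find_redirects` helper only pattern-matches the markup and will miss redirects built dynamically.

```python
import re
from html.parser import HTMLParser

# Matches location = "...", location.href = "...",
# location.replace("...") and location.assign("...").
JS_LOCATION = re.compile(
    r"""location(?:\.href)?\s*=\s*["']([^"']+)["']"""
    r"""|location\.(?:replace|assign)\(\s*["']([^"']+)["']"""
)
META_URL = re.compile(r"url\s*=\s*(\S+)", re.IGNORECASE)


class RedirectFinder(HTMLParser):
    """Records (kind, target) for each redirect pattern found in the markup."""

    def __init__(self):
        super().__init__()
        self.redirects = []
        self._in_script = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "script":
            self._in_script = True
        elif tag == "meta" and (attributes.get("http-equiv") or "").lower() == "refresh":
            match = META_URL.search(attributes.get("content") or "")
            if match:
                self.redirects.append(("meta", match.group(1).strip("'\"")))

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_script = False

    def handle_data(self, data):
        if self._in_script:
            for match in JS_LOCATION.finditer(data):
                self.redirects.append(("js", match.group(1) or match.group(2)))


def find_redirects(html):
    finder = RedirectFinder()
    finder.feed(html)
    finder.close()
    return finder.redirects


# Hypothetical page containing both kinds of redirect.
PAGE = """
<html><head>
<meta http-equiv="refresh" content="0; url=/new-home">
<script>if (!loggedIn) { window.location.href = "/login"; }</script>
</head><body></body></html>
"""

redirects = find_redirects(PAGE)
print(redirects)
```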

How Can I Take Advantage of JavaScript Rendered Crawling?

New to DeepCrawl? Take a look at our packages, making sure to add JavaScript Rendering as an add-on during the sign-up process to experience the power of our unparalleled crawling capabilities.

Already a DeepCrawl fangirl/boy? Log in to the DeepCrawl app, head over to the Subscription section of your account and select JavaScript Rendering as an add-on.

If you have any questions, don’t hesitate to send us a message or contact your customer success representative directly.

Also, if you want to learn more about JavaScript & SEO, you can do so by watching our recent webinar with industry expert Bartosz Goralewicz.