All websites have their own ‘churn and change’ frequency: a homepage might change once a month, a blog might update once or twice a week and news sites change multiple times an hour.

Similarly, your content is either ‘evergreen’ (i.e. it will continue to be useful for the life of the page) or it has an expiration date. Typical content that ‘expires’ includes:

- News stories, where an update is published on a separate URL

- Pages for products that sell out and are not replenished

- Event listings that expire after the event date

- Listing pages (search results/category pages) that change from having content to being empty.

In this post, we’ll explore two options for dealing with this type of content as an SEO:

- Redirect to a relevant alternative (301), or

- Design the friendliest possible page and return the relevant status code (200/404/410).

If there is a new or equivalent version available:

If your old page no longer has any on-site value, you could 301 to a new version. If you choose to reactivate the page later you can remove the redirect and the page should be re-indexed within a few Google crawls.

However, 301s should be managed in accordance with best practice guidelines if there are going to be further versions published – see ‘beware redirect chains’ below.

Alternatively you could link the old version to the new version. This involves less technical work, fewer redirects to manage and, where it might be useful, keeps the old information available to look at. Linking to updates can be managed via manual contextual links or using tags that will control the related content within the content template. This is probably the best option for news stories or blog posts, where the older content is still useful for readers looking for a timeline of a developing story.

Beware redirect chains

For example, don’t redirect from product A to product B, and then redirect again from product B to product C when that is released. Each extra hop delays your users, and long chains will eventually cause problems for search performance: Google will only follow four or five redirects in the same chain, and chains also reduce crawl efficiency. Instead, update the older redirects so that both product A and product B point directly to product C.
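To illustrate the point above, here is a minimal sketch of flattening a redirect map so that every old URL points straight at its final destination. It assumes your redirects are available as a simple source-to-target mapping; the function name `flatten_redirects` and the example URLs are hypothetical.

```python
def flatten_redirects(redirects):
    """Return a copy of `redirects` in which every source points at its final target."""
    flattened = {}
    for source in redirects:
        target = redirects[source]
        seen = {source}
        # Follow the chain until we reach a URL that is not itself redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop detected at {target}")
            seen.add(target)
            target = redirects[target]
        flattened[source] = target
    return flattened


# Hypothetical example: product A was replaced by B, and B was later replaced by C.
redirect_map = {
    "/product-a": "/product-b",
    "/product-b": "/product-c",
}
flat = flatten_redirects(redirect_map)
print(flat)
```

Running a check like this whenever a new version is published keeps every old URL a single hop away from the current page.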

Find more information on managing redirects in our guide here.

If there is no new version available:

Keep the page active and add related content to it to keep users within the site. Think about other pages you can link to that will give the user what they wanted in the first place without having to confuse them with a redirect. Keep an eye on bounce rates on these pages to ensure your additions are serving their intended purpose.

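Return a 404 on the same URL. It might take a few crawls for the page to be removed from search results. The page can be reactivated later and will be recrawled.

Return a 410 on the same URL. A 410 is a more explicit signal that the page is gone, so the page will be de-indexed more quickly, often as soon as the URL is next crawled. The page can still be reactivated later and will be recrawled.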
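The options covered so far (redirect, keep the page live, 404 or 410) can be expressed as a small decision function. This is only a sketch of one possible policy: the `ExpiredPage` fields and the `choose_response` function are hypothetical names, and the order of the checks is an assumption you should adapt to your own site.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class ExpiredPage:
    url: str
    replacement_url: Optional[str] = None  # new or equivalent version, if one exists
    has_related_content: bool = False      # page is still useful with related links added
    remove_quickly: bool = False           # we want the URL dropped from the index fast


def choose_response(page: ExpiredPage) -> Tuple[int, Optional[str]]:
    """Return (status_code, redirect_target) for an expired page."""
    if page.replacement_url:
        return 301, page.replacement_url  # send users and crawlers to the new version
    if page.has_related_content:
        return 200, None                  # keep the page active with related content
    if page.remove_quickly:
        return 410, None                  # explicit "gone" signal
    return 404, None                      # default "not found"


# Hypothetical example pages.
sold_out = ExpiredPage("/product-a", replacement_url="/product-b")
past_event = ExpiredPage("/events/summer-fair-2014", has_related_content=True)
print(choose_response(sold_out), choose_response(past_event))
```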

See this Google+ post by John Mueller for more information on reindexing pages that were previously 404 or 410.

Special Sitemaps for expired pages

You can submit an XML Sitemap with expired pages to help get them removed from the index quicker. It’s best to put them into a separate Sitemap so you can separate them from other indexable URLs. John Mueller discussed this further in a Google hangout in 2014.
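As a rough illustration, a separate Sitemap for expired URLs could be generated with a few lines of Python. The URLs, the `build_sitemap` helper and the output filename below are hypothetical; the only fixed part is the standard sitemaps.org namespace.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return sitemap XML (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical expired URLs, kept apart from the Sitemap of live, indexable pages.
expired_urls = [
    "https://www.example.com/events/summer-fair-2014",
    "https://www.example.com/products/sold-out-widget",
]
expired_sitemap = build_sitemap(expired_urls)

# Write the result to its own file (filename is an assumption).
with open("sitemap-expired.xml", "w", encoding="utf-8") as handle:
    handle.write(expired_sitemap)
```

Once the expired URLs have dropped out of the index, the separate file can be emptied or removed without touching your main Sitemap.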

Do large numbers of 404s, 410s or 301s cause a penalty?

There is no penalty for large numbers of 404s, 410s or 301s. Gary Illyes from Google attempted to dispel this myth recently on Twitter and John Mueller also discussed it in a Google+ post in 2013.

Whoever came up with the idea that having 404s gives a site any sort of penalty, you’re wrong. Utterly wrong.

— Gary Illyes (@methode) August 6, 2015

Large numbers of 301 redirects and expired/low value URLs that 404 won’t directly cause any general ranking problems for the site (assuming you’re doing them properly).

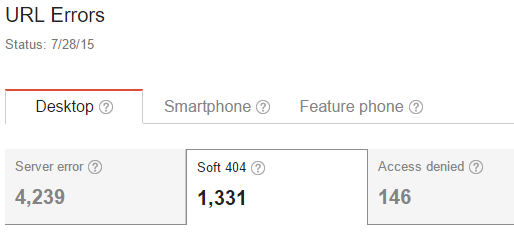

Soft 404s

Google is actively looking for pages that do not return a 404 status but appear to be some kind of expired or thin-content page that no longer has unique and valuable content. Read Google’s guide to soft 404s here.

Any pages that are regarded as soft 404s will show in Google Search Console (under Crawl > Crawl Errors):

This can sometimes be a configuration error, but if not you may want to try to extend the performance window of expired pages by generating a high quality ‘soft 404’ page.

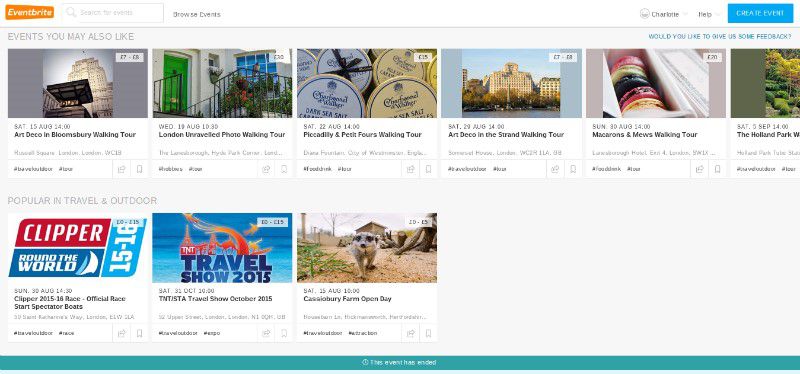

For example, Eventbrite show a banner of upcoming related events on an expired event page: this tactic could also apply to similar products or listings.

Managing expired pages with DeepCrawl: useful reports

Crawl type: Universal or List

There are two ways to find expired URLs to see how they behave in DeepCrawl:

- Include organic landing pages in a Universal Crawl to find pages that were previously getting traffic but that have now expired.

- If you have a list of pages that you know have expired, run a List Crawl targeting just those URLs to see how they behave.

Unique Pages, Duplicate Pages and Paginated Pages

Your list of expired pages could show as unique, duplicates or paginated: the relevant reports under Indexation > Indexable Pages can show you if they appear how you expect them to.

Different versions of the same product or event should show as duplicate pages under Indexation > Indexable Pages > Duplicate Pages. The older versions can then be 301 redirected to the most up-to-date version.

The same report is also useful for spotting any Soft 404 pages that have crept in, since Soft 404s should all look the same at the time of the crawl, even if they did not have the same content in their original state.
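The idea that Soft 404s all look the same can be sketched outside of any crawler, too. The example below is not how DeepCrawl detects duplicates; it is a simplified illustration that assumes you already have each page's body text, and it only catches pages whose text is identical after normalising whitespace and case. The function name and sample pages are hypothetical.

```python
import hashlib
from collections import defaultdict


def find_identical_pages(pages):
    """Group URLs whose normalised body text is identical.

    `pages` maps URL -> body text. Returns a list of URL groups
    that contain more than one URL.
    """
    groups = defaultdict(list)
    for url, body in pages.items():
        # Collapse whitespace and ignore case so trivial differences do not matter.
        normalised = " ".join(body.split()).lower()
        digest = hashlib.sha256(normalised.encode("utf-8")).hexdigest()
        groups[digest].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical crawl data: two expired events share the same "ended" template.
crawled_pages = {
    "/events/summer-fair-2014": "Sorry, this event has ended.",
    "/events/winter-gala-2014": "Sorry,  this event has ended.\n",
    "/events/spring-run-2016": "Join us for the spring run on 3 April.",
}
suspects = find_identical_pages(crawled_pages)
print(suspects)
```

Any group that comes back is worth a manual look: pages that return a 200 status but share one generic template are likely Soft 404 candidates.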

301 Redirects / Non-301 Redirects and 4xx Errors

Expired pages that return a 404 or 410, or that redirect with a 301 or 302, will show up in the relevant reports under Indexation > Non-200 Status.

Noindex Pages or Canonicalized Pages

Pages which are noindexed or canonicalized will show under Indexation > Non-Indexable Pages.