Watch the full GEO webinar session here:

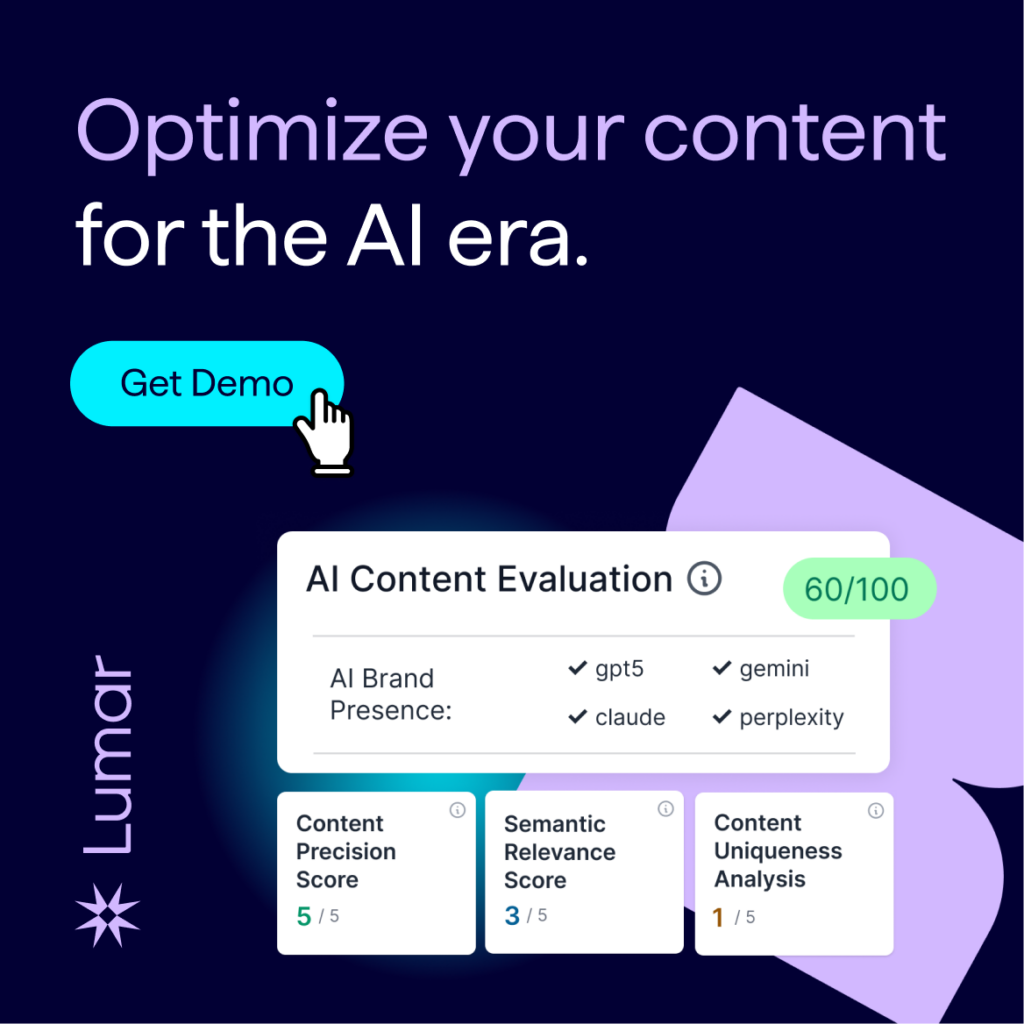

Content Optimization Tools to Build AI Search Visibility

Your content ranks in traditional search. Your brand is established. So why isn’t ChatGPT citing you?

Watch this webinar to explore new Lumar GEO/AEO tools that show you exactly what AI systems see when they evaluate your content — and what you need to fix to get cited by AI.

Ali Habibzadeh, Chief Technology Officer at Lumar, and Head of Product Matt Ford demonstrate content precision scoring, AI sentiment analysis, competitive uniqueness reports, and the EEAT signals that determine whether generative engines trust your brand enough to recommend it.

Watch the full webinar above, including audience poll results and the Q&A session, or read on for the top takeaways.

How to evaluate & optimize your content for AI visibility: key takeaways from Lumar’s GEO / AEO tools webinar

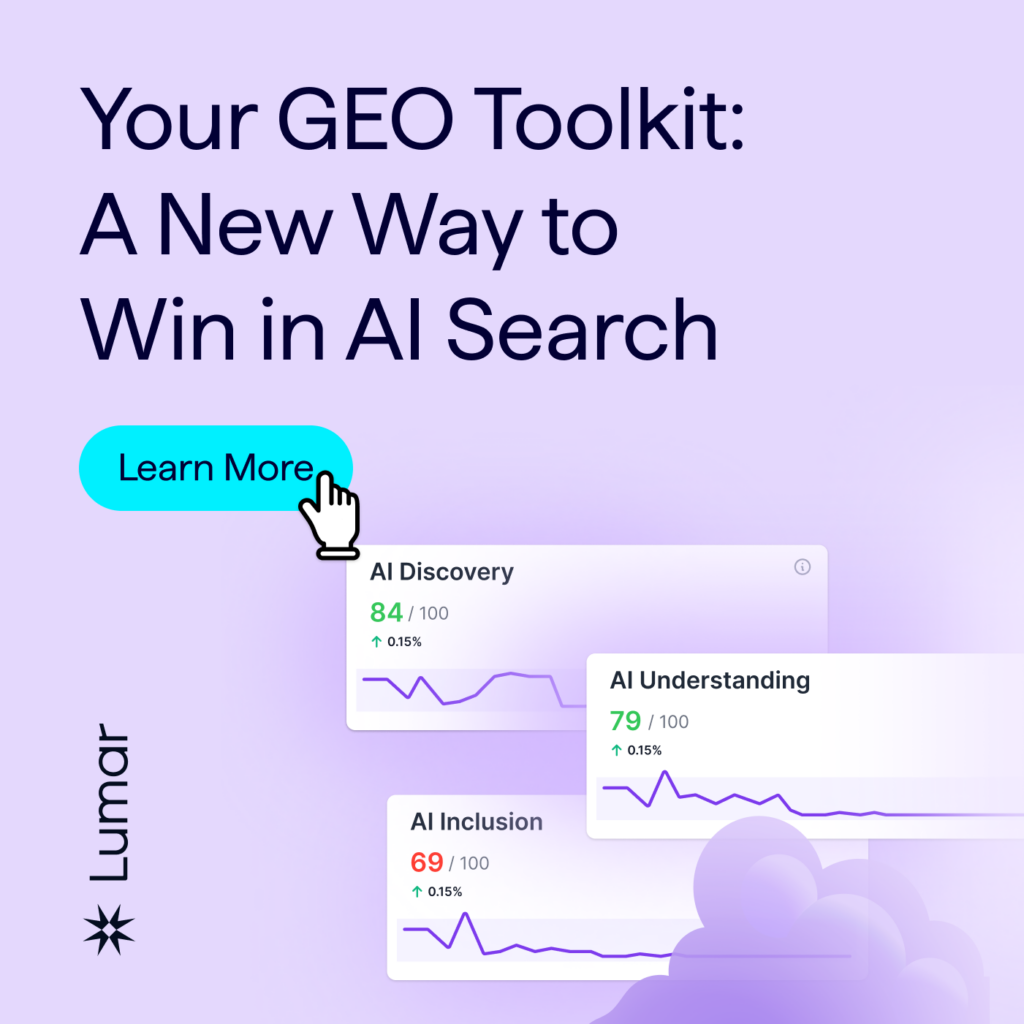

Take a deep dive into Lumar’s AI Content Intelligence Suite to learn how you can systematically evaluate how AI models interpret your content — and how to optimize for stronger AI brand visibility, authority, and citations.

While many teams are experimenting with AI chatbots, far fewer have implemented a structured way to evaluate how their content is processed, chunked, retrieved, judged, and selected for use by large language models.

“There is no single guaranteed way to control how models cite your brand,” says Ali Habibzadeh, Chief Technology Officer at Lumar. “But there are reliable approaches that are founded in somewhat traditional practices like EEAT [Experience, Expertise, Authority, & Trustworthiness, a common ‘best practice’ in SEO content creation].”

“The most reliable approach is content analysis governance that continuously monitors your brand as it appears [in AI answers], flags misalignments, and enforces consistency over time. So you can exert influence over the training data, whether it comes from your website or from [other] content online.”

Habibzadeh reminds us that, when it comes to evaluating your content for Generative Engine Optimization (GEO), we need to remember that:

- LLMs break content into ‘chunks’ of about 300 to 400 tokens.

- These chunks are embedded in the vector space.

- AI retrieves from the vector space content that is semantically most relevant to the user’s query.
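To make the chunk, embed, and retrieve steps above concrete, here is a minimal Python sketch. It is an illustration only, not how Lumar or any particular AI system works: whitespace-separated words stand in for model tokens, a bag-of-words count vector stands in for a learned embedding, and every function name here is hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercased word tokens; a rough stand-in for a real model tokenizer."""
    return re.findall(r"[a-z0-9']+", text.lower())


def chunk_words(text: str, max_tokens: int = 350) -> list[str]:
    """Split text into consecutive chunks of at most max_tokens words."""
    words = text.split()
    return [" ".join(words[i:i + max_tokens]) for i in range(0, len(words), max_tokens)]


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts (an assumption; real systems use learned vectors)."""
    return Counter(tokenize(text))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]


page = (
    "Our returns policy lets customers send back shoes within 30 days. "
    "The company was founded in 1998 and sponsors a local running club."
)
# A tiny chunk size is used here only so the short example splits into several chunks.
chunks = chunk_words(page, max_tokens=11)
best = retrieve("how many days to send back shoes", chunks)
print(best[0])
```

The focused chunk about returns wins the retrieval because it shares the most vocabulary with the query, which is the intuition behind the point that unfocused content gets ignored.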

According to Habibzadeh, this means that:

- Unfocused content gets ignored.

- Incomplete content gets skipped.

- Unoriginal content loses to whoever said it first.

“So what we have built is a set of specialized agents using ‘LLM-in-the-loop’ which means two or more LLMs with specific instructions work iteratively together in order to analyze the content of your website and evaluate the way AI systems actually perceive it,” he explains, of Lumar’s GEO Content Evaluator tools.
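As a rough illustration of the “LLM-in-the-loop” idea, the Python sketch below wires two callables together in an iterative loop: one produces an assessment and the other reviews it and sends feedback until it is satisfied. The stub functions are hypothetical placeholders for real model calls, and the loop structure is an assumption rather than a description of Lumar’s actual agents.

```python
from typing import Callable


def llm_in_the_loop(
    content: str,
    evaluate: Callable[[str, str], str],  # (content, feedback) -> assessment
    review: Callable[[str], str],         # assessment -> feedback ("" means accepted)
    max_rounds: int = 3,
) -> tuple[str, int]:
    """Alternate between an evaluator and a reviewer until the reviewer accepts."""
    feedback = ""
    assessment = ""
    for round_no in range(1, max_rounds + 1):
        assessment = evaluate(content, feedback)
        feedback = review(assessment)
        if not feedback:
            return assessment, round_no
    return assessment, max_rounds


# Hypothetical stubs standing in for two LLMs with different instructions.
def stub_evaluator(content: str, feedback: str) -> str:
    base = "The chunk is vague."
    if "quote" in feedback:
        return f'{base} Evidence: "{content[:30]}"'
    return base


def stub_reviewer(assessment: str) -> str:
    return "" if "Evidence:" in assessment else "Please quote the text you are judging."


assessment, rounds = llm_in_the_loop(
    "Our platform is the best solution for everyone.", stub_evaluator, stub_reviewer
)
print(rounds, assessment)
```

In a real setup each callable would wrap a model call with its own instructions; the `max_rounds` cap keeps the loop from running indefinitely if the two never agree.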

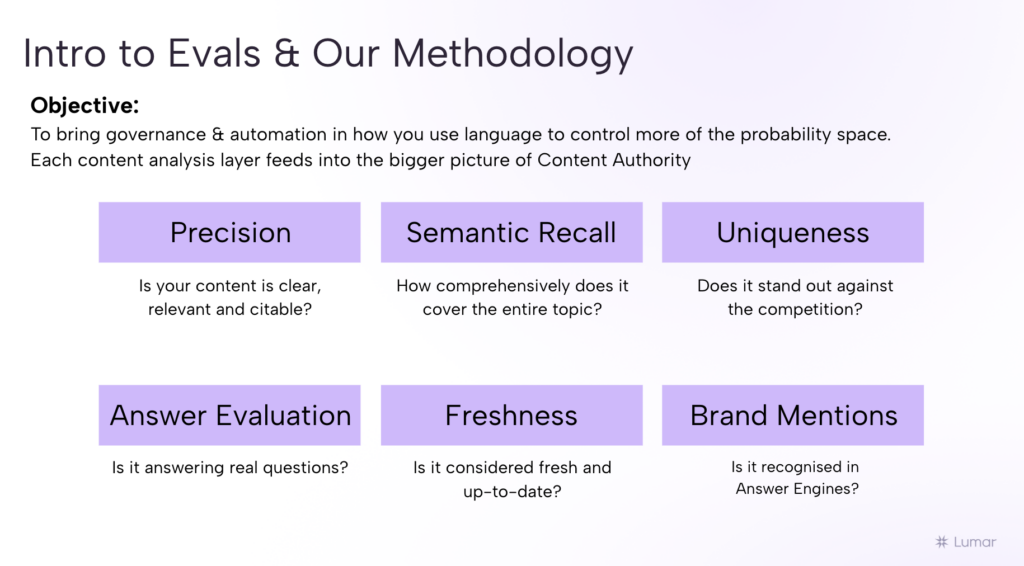

Analyzing your content across 6 dimensions with Lumar

Using Lumar’s “Content Eval” tools, you’ll have access to a powerful suite of AI agents that analyze your content across 6 core dimensions for Generative Engine Optimization (GEO, also known as Answer Engine Optimization, or AEO):

- Precision – is your content clear, relevant, and citable?

- Semantic Recall – how comprehensively does it cover its topic?

- Uniqueness – does it stand out against the competition?

- Answer Evaluation – is it answering real questions that users have?

- Freshness – is it considered fresh and up to date?

- Brand Mentions – is your brand’s content getting cited by AI chatbots / LLM answer engines?

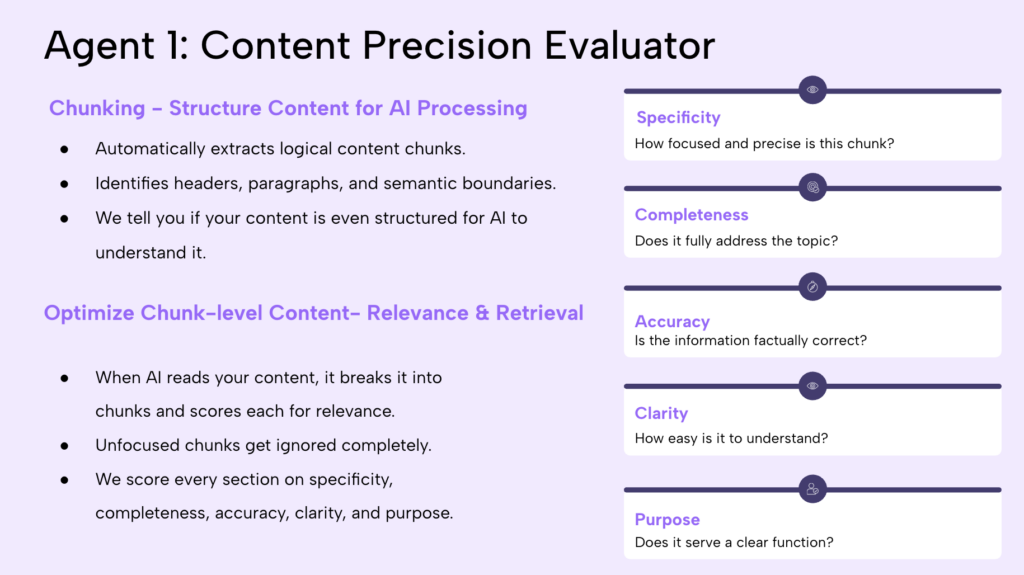

1. Content Precision Evaluation: Are Your Content Chunks Clear, Complete, and Focused?

The first Lumar content evaluation tool Ford and Habibzadeh demonstrate here is our Content Precision Evaluator.

Habibzadeh describes how it works:

“We divide the page into logical chunks… and assess each chunk based on specificity, completeness, accuracy, clarity, and intent.”

Each chunk is scored based on how essential it is to the page’s intended concept and how well it supports that concept.

Ford then showed how this content precision scoring feature looks inside the Lumar platform, highlighting how teams can:

- Sort and filter pages by precision score

- Identify weak or tangential sections

- Generate targeted recommendations for editorial improvements
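A simplified way to picture per-chunk scoring and page-level sorting is sketched below in Python. The five criteria come from the description above; the 0 to 1 scale, the unweighted average, and the 0.5 threshold are assumptions for illustration, not Lumar’s scoring formula.

```python
from dataclasses import dataclass
from statistics import mean

CRITERIA = ("specificity", "completeness", "accuracy", "clarity", "intent")


@dataclass
class ChunkScore:
    text: str
    scores: dict[str, float]  # one 0-1 score per criterion (assumed scale)

    @property
    def precision(self) -> float:
        """Unweighted average across the five criteria (an assumption)."""
        return mean(self.scores[c] for c in CRITERIA)


def page_precision(chunks: list[ChunkScore]) -> float:
    """Page-level score as the mean of its chunk scores."""
    return mean(c.precision for c in chunks)


def weak_chunks(chunks: list[ChunkScore], threshold: float = 0.5) -> list[ChunkScore]:
    """Chunks below the threshold, weakest first, as candidates for editing."""
    return sorted((c for c in chunks if c.precision < threshold), key=lambda c: c.precision)


strong = ChunkScore("How returns work, step by step.", dict.fromkeys(CRITERIA, 0.8))
tangent = ChunkScore(
    "A history of our office dog.",
    {"specificity": 0.2, "completeness": 0.4, "accuracy": 0.2, "clarity": 0.4, "intent": 0.3},
)
page = [strong, tangent]
print(round(page_precision(page), 2), [c.text for c in weak_chunks(page)])
```

Sorting pages by `page_precision` and then inspecting `weak_chunks` mirrors the workflow of filtering by score and identifying tangential sections.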

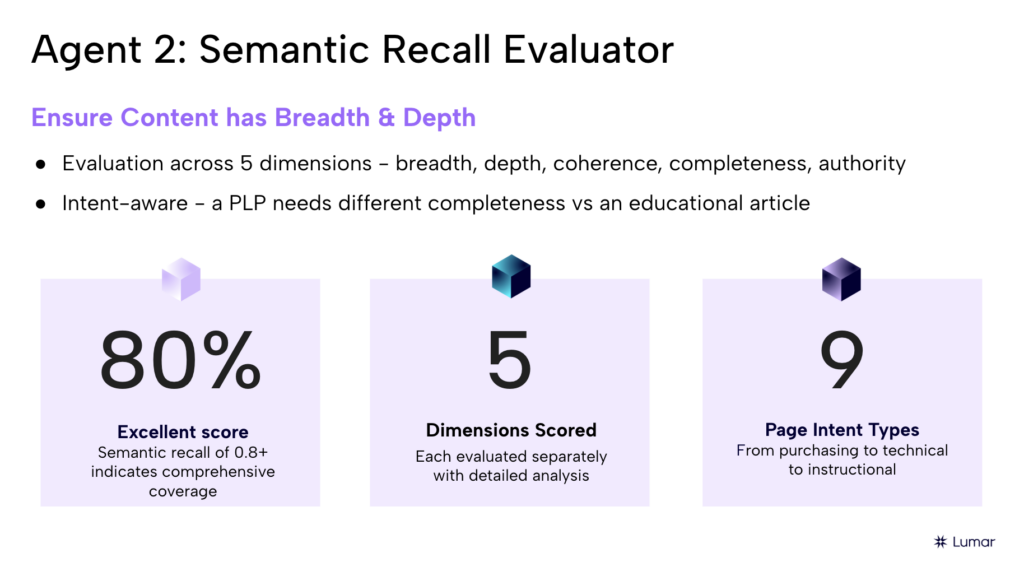

2. Semantic Recall Evaluation: Measuring Topical Authority Through Coverage

Content precision alone isn’t enough to get your content reliably selected by AI bots for their responses to user queries. Much like in EEAT principles, authority on a given topic matters here, too, and that authority comes from comprehensive topic coverage.

Habibzadeh explained the goal of Lumar’s Semantic Recall evaluator agent:

“LLMs answer from comprehensive sources. Partial coverage [of a given topic] weakens the matches.”

This evaluator builds a knowledge tree for a topic and compares it against the page’s content to identify missing subtopics. As Habibzadeh explains:

“Whatever is missing [from the page] is presented back to you — topics you should have been able to cover in order to cover the breadth of the topic.”

This tool helps teams uncover content gaps such as missing examples, case studies, or compliance details — all critical for being perceived by AI bots as a complete, authoritative, and cite-worthy source.
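The comparison of a knowledge tree against page content can be sketched as a simple recall calculation. In the Python example below, the tree is a hand-written nested dictionary and coverage is checked by plain substring matching; both are assumptions made for illustration, since a real evaluator would build the tree and judge coverage semantically.

```python
def leaf_topics(tree: dict) -> list[str]:
    """Collect the leaf subtopics of a nested {topic: {subtopic: {...}}} tree."""
    leaves = []
    for topic, children in tree.items():
        if children:
            leaves.extend(leaf_topics(children))
        else:
            leaves.append(topic)
    return leaves


def semantic_recall(tree: dict, page_text: str) -> tuple[float, list[str]]:
    """Return (share of leaf subtopics found on the page, list of missing subtopics)."""
    text = page_text.lower()
    leaves = leaf_topics(tree)
    missing = [t for t in leaves if t.lower() not in text]
    recall = (len(leaves) - len(missing)) / len(leaves) if leaves else 0.0
    return recall, missing


# Hypothetical knowledge tree for an example topic.
knowledge_tree = {
    "email deliverability": {
        "authentication": {"SPF": {}, "DKIM": {}},
        "list hygiene": {},
        "case studies": {},
    }
}
page_text = "We explain SPF and DKIM records and cover basic list hygiene."
recall, missing = semantic_recall(knowledge_tree, page_text)
print(recall, missing)
```

The `missing` list plays the role of the gaps presented back to the content team, such as the absent case studies in this example.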

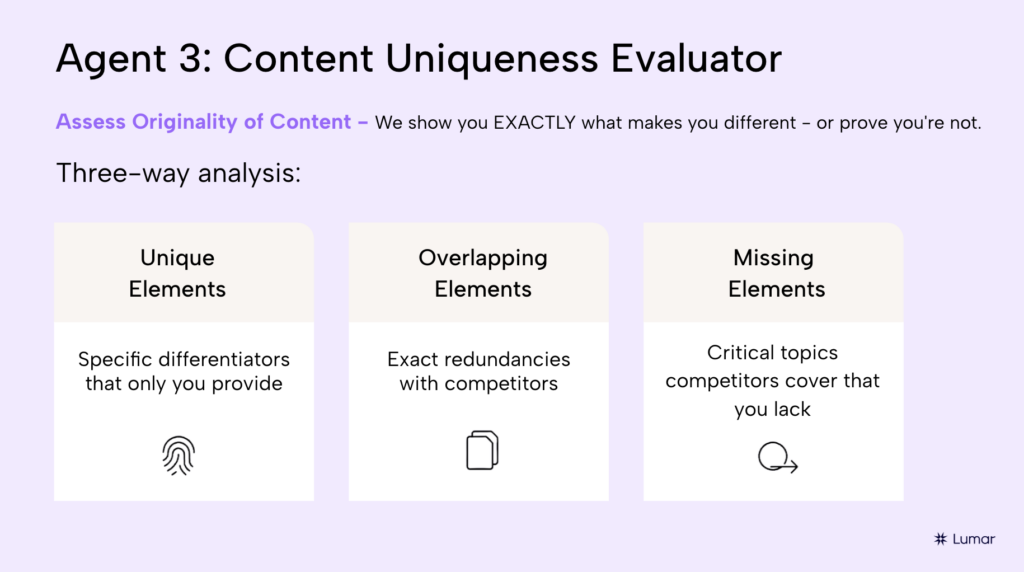

3. Content Uniqueness: Standing Out from the Crowd for AI Retrieval

Next, the presenters tackled a common pitfall: content sameness.

“Originality really matters [for GEO],” says Habibzadeh. “LLMs identify highly similar semantic patterns, and repetitive content is harder to distinguish.”

Lumar’s Content Uniqueness evaluator compares a page against top-ranking competitors by:

- Identifying unique concepts that your content covers

- Highlighting overlapping themes

- Surfacing missing concepts in your content that competitors are including
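Once concepts have been extracted from each page, the three comparisons above reduce to set operations. The Python sketch below assumes concept extraction has already happened (the concept sets are hand-written and hypothetical) and shows only the comparison step.

```python
def compare_concepts(ours: set[str], competitors: list[set[str]]) -> dict[str, list[str]]:
    """Split concepts into unique to us, shared with competitors, and missing from our page."""
    theirs = set().union(*competitors)
    return {
        "unique": sorted(ours - theirs),
        "overlapping": sorted(ours & theirs),
        "missing": sorted(theirs - ours),
    }


# Hypothetical concept sets for one page and two competitor pages.
our_page = {"crawl budget", "log file analysis", "render budget"}
competitor_pages = [
    {"crawl budget", "xml sitemaps"},
    {"crawl budget", "log file analysis", "robots.txt"},
]
report = compare_concepts(our_page, competitor_pages)
print(report)
```

A page with an empty `unique` list is the “content sameness” case: everything it says is already said elsewhere.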

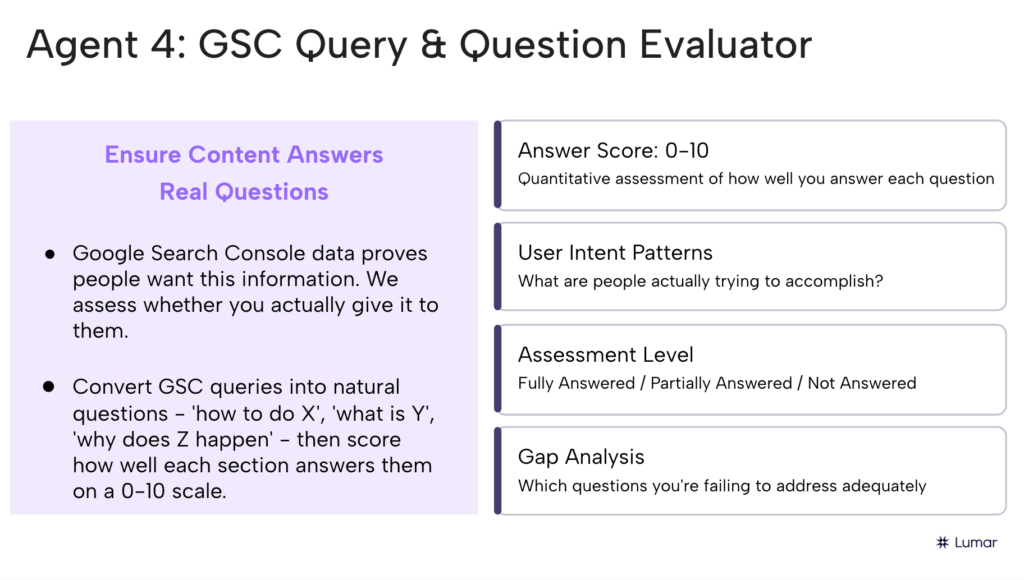

4. Query & Question Evaluation: Does Your Content Answer Actual User Questions?

AI systems are inherently question-driven — and so are users.

Habibzadeh explained how Lumar’s GEO Content Tools connect your real Google Search Console data to AI-style prompts:

“We take the most-clicked GSC queries… transform that query into a natural-sounding question… and assess whether it’s fully answered [by your content].”

Each question is then scored against your content as:

- Fully answered

- Partially answered

- Not answered

“These are [representative of] real user queries,” explains Ford. “It’s not speculation; it’s what real people are asking [based on your GSC data].”

This allows teams to prioritize improvements based on actual user demand for information, not assumptions.
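The three-way labeling can be illustrated with a deliberately crude Python sketch. Here a query counts as answered when its key terms appear in the content; this term-overlap check and the stopword list are assumptions standing in for the LLM-based judgment described above, and the query-to-question rewriting step is left out.

```python
import re

STOPWORDS = {"a", "an", "the", "is", "what", "how", "to", "for", "of", "in", "do", "i"}


def key_terms(query: str) -> set[str]:
    """Content-bearing words of a search query."""
    return {w for w in re.findall(r"[a-z0-9]+", query.lower()) if w not in STOPWORDS}


def answer_status(query: str, content: str) -> str:
    """Label a query by the share of its key terms that the content mentions."""
    terms = key_terms(query)
    words = set(re.findall(r"[a-z0-9]+", content.lower()))
    if not terms:
        return "Not answered"
    coverage = len(terms & words) / len(terms)
    if coverage == 1.0:
        return "Fully answered"
    return "Partially answered" if coverage > 0 else "Not answered"


content = (
    "Crawl budget is the number of URLs a search engine will crawl on your site "
    "in a given period. This definition matters most for large sites."
)
# Hypothetical queries of the kind a Search Console export might contain.
for query in ["crawl budget definition", "crawl budget calculator", "hreflang checker"]:
    print(query, "->", answer_status(query, content))
```

Counting how many high-click queries fall into each label gives a simple way to rank pages by unmet demand.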

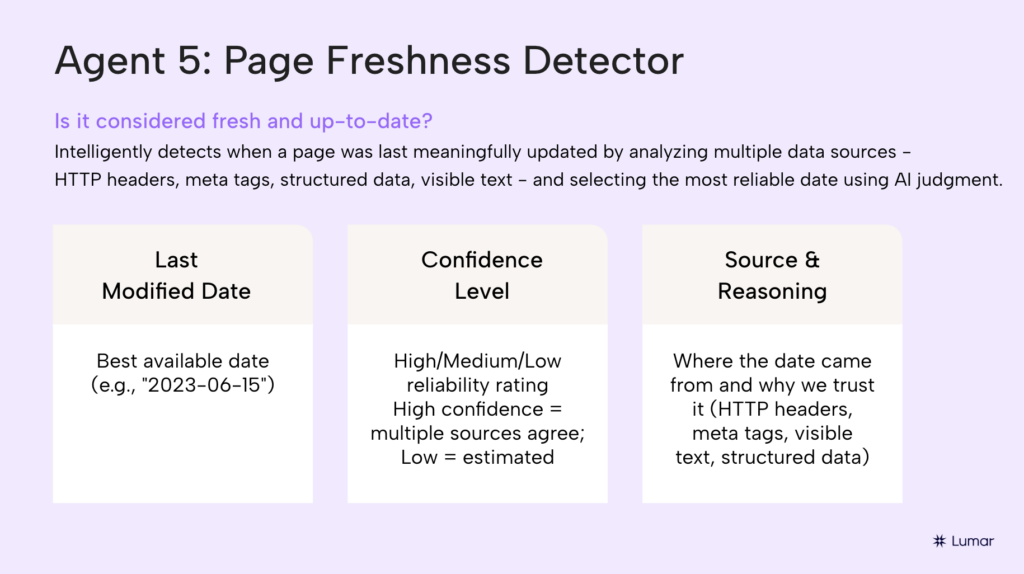

5. Page Freshness Evaluation: Knowing What Content to Update — and When

With thousands of URLs to manage, deciding which pages to tackle first in your GEO plan is critical.

Habibzadeh explained Lumar’s Page Freshness methodology, which blends:

- Topic trend analysis

- Query Deserves Freshness (QDF) evaluation

- Page modification dates

“This is a powerful way of knowing which pages to tackle first if you don’t have the bandwidth to do them all,” he explains.

Some pages likely demand constant updates, while others remain evergreen.
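One way to picture how the three signals might be blended into an update priority is sketched below in Python. The formula (average of trend and QDF, multiplied by how stale the page is) and the one-year staleness horizon are assumptions chosen for illustration; the webinar does not specify how Lumar combines them.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Page:
    url: str
    trend: float         # 0-1, how strongly the topic is trending (assumed scale)
    qdf: float           # 0-1, how much queries for this page deserve freshness
    last_modified: date


def staleness(page: Page, today: date, horizon_days: int = 365) -> float:
    """Age of the page as a 0-1 fraction of the horizon, capped at 1."""
    age = max((today - page.last_modified).days, 0)
    return min(age / horizon_days, 1.0)


def update_priority(page: Page, today: date) -> float:
    """High when the topic demands freshness AND the page is old (assumed blend)."""
    demand = (page.trend + page.qdf) / 2
    return demand * staleness(page, today)


today = date(2025, 1, 1)
pages = [
    Page("/evergreen-guide", 0.1, 0.1, today - timedelta(days=700)),
    Page("/news-roundup", 0.9, 0.8, today - timedelta(days=300)),
    Page("/just-updated", 0.9, 0.9, today - timedelta(days=10)),
]
ranked = sorted(pages, key=lambda p: update_priority(p, today), reverse=True)
print([p.url for p in ranked])
```

Multiplying rather than adding keeps an old but evergreen page from outranking a stale page on a fast-moving topic.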

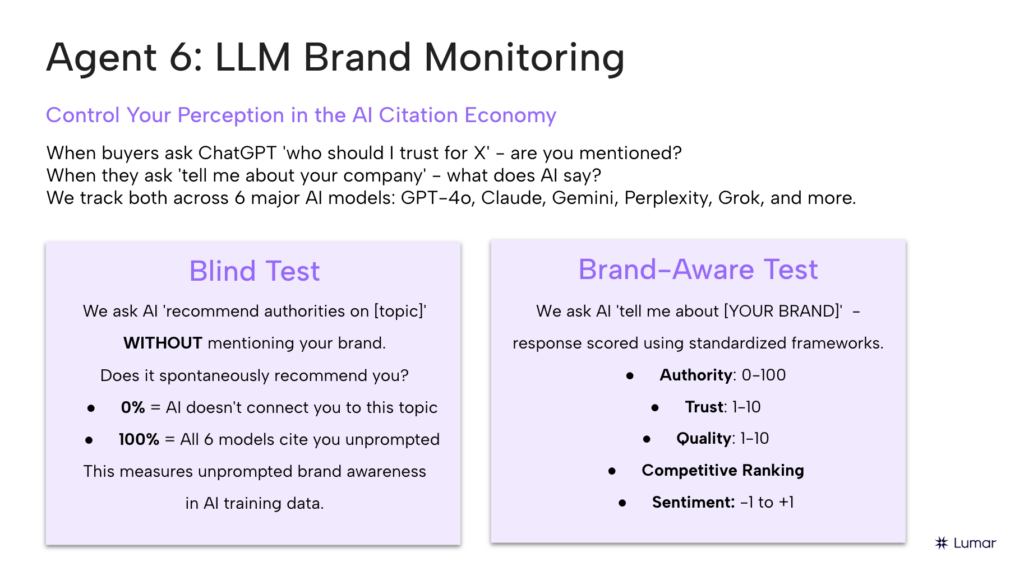

6. LLM Brand Monitoring: Hearing It “From the Horse’s Mouth”

Finally, Habibzadeh and Ford delve into how Lumar helps you monitor your brand’s presence, entity associations, and sentiment across the major AI platforms.

This includes ‘blind’ prompting, to help reduce biased prompting that might artificially inflate the results. As Habibzadeh explains, “We ask the LLM, without mentioning your brand, what are the top websites and brands it associates with this topic.”

Lumar measures:

- Whether your brand is mentioned by major AI platforms

- Whether it’s cited

- Where it appears in the list compared to others
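The three measurements above can be sketched in Python as follows. The prompt wording, the brand and domain names, and the assumption that the model’s answer has already been parsed into a ranked list of brands and a list of cited URLs are all hypothetical; no real AI platform API is called here.

```python
from urllib.parse import urlparse


def blind_prompt(topic: str) -> str:
    """Build a prompt that names the topic but never the brand being measured."""
    return f"What are the top websites and brands you associate with {topic}?"


def brand_visibility(brand: str, domain: str, ranked_brands: list[str], cited_urls: list[str]) -> dict:
    """Report whether the brand is mentioned, whether its domain is cited, and its list position."""
    names = [b.strip().lower() for b in ranked_brands]
    position = names.index(brand.lower()) + 1 if brand.lower() in names else None
    hosts = {urlparse(u).netloc.lower().removeprefix("www.") for u in cited_urls}
    return {
        "mentioned": position is not None,
        "cited": domain.lower() in hosts,
        "position": position,
    }


prompt = blind_prompt("technical SEO auditing")
# Hypothetical parsed output from one AI platform's answer to the blind prompt.
result = brand_visibility(
    brand="ExampleBrand",
    domain="example.com",
    ranked_brands=["Acme Analytics", "ExampleBrand", "Other Co"],
    cited_urls=["https://www.example.com/guide", "https://acme.test/"],
)
print(prompt)
print(result)
```

Running the same check across several platforms and prompts would give a comparable view of where a brand shows up and where it is absent.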

The platform then runs a brand-aware test to assess authority, trust, sentiment, and competitive positioning for your brand among the different AI models’ outputs: “This helps you understand how a model thinks of you specifically.”

Ford also highlighted brand monitoring features specifically built to cover Google AI Overviews and AI Mode, showing where brands appear in these Google SERP features, as well as in major chatbot responses from the likes of Gemini, ChatGPT, Claude, or Perplexity.

What’s Coming Next for Lumar GEO Tools

The webinar closed with a look ahead for Lumar’s ever-evolving suite of GEO/AEO tools for brands that take appearing in AI search seriously.

Ford explained that AI perception isn’t shaped by on-site content alone: “There’s a whole world outside of your web space that’s influencing [AI’s] knowledge and sentiment perception of your brand.”

Upcoming Lumar GEO capabilities include:

- Social listening and off-site brand research

- Prompt-level AI visibility tracking

- Topic clustering tied directly to crawl data

The Lumar GEO tools are designed to give teams a full GEO command center, combining content and technical GEO insights to improve a brand’s chances of becoming a trusted, reliable source that AI platforms regularly cite and mention favorably.

As Ford summarized: “The purpose of this methodology is to provide you with the tools to control this probability space.”

By aligning your brand’s content with how AI systems actually evaluate, retrieve, and cite information, Lumar’s GEO Content Intelligence Suite gives brands a scalable way to earn visibility, authority, and trust in AI-powered search.

Book your Lumar GEO Content Intelligence Suite demo here.
