Friday 15th September saw BrightonSEO return for its second outing of the year. The day was packed with SEO knowledge from all speakers across the six tracks. In case you spent too much time drinking free beer in the DeepCrawl Beer Garden, here’s a recap of some of the talks throughout the day.

Once you’ve finished this recap, make sure to check out our Keynote Recap with Gary Illyes.

Table football, beer, charging points, free caffeine. And good job on the garden @DeepCrawl Ready to brush up on #onlinePR 1st #BrightonSEO pic.twitter.com/aMgOw0Px2w

— Shake It Up Creative (@ShakeItCreative) September 15, 2017

Bartosz Góralewicz – Can Google properly crawl and index JavaScript? SEO experiments – results and findings.

Talk Summary

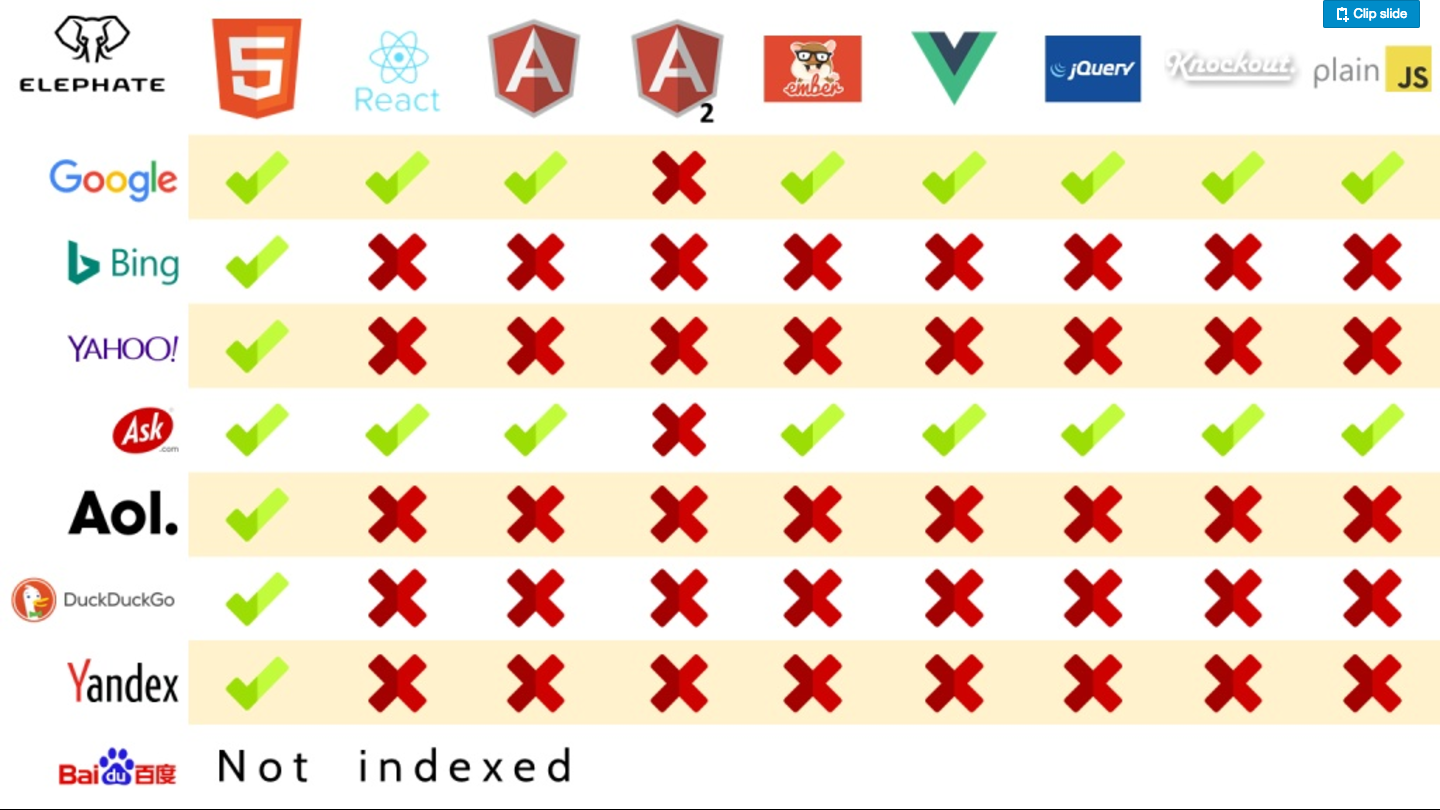

The session covered different JavaScript frameworks, which are becoming increasingly widespread, and the results of Bartosz’s experiments investigating the crawlability and indexability of these by search engines.

Key Takeaways

What is the problem with JavaScript?

- HTML is already processed by search engines but JavaScript needs to be processed so that it is SEO friendly.

- There are many different frameworks and JavaScript isn’t as forgiving as HTML.

Developers often point to this Google article that confirms it’s ok to use JavaScript.

- The post says: “Today, as long as you’re not blocking Googlebot from crawling your JavaScript or CSS files, we are generally able to render and understand your web pages like modern browsers.”

- However, sites like Hulu lost most of their traffic across many keywords when they switched to JavaScript sites.

Bartosz wanted to conduct experiments to see how Googlebot crawls JavaScript – the two have a difficult relationship.

- Created Jsseo.expert – every page on the site is made with a different JavaScript framework and configuration.

- Articoolo (a machine learning tool) was then used to populate the pages with content.

- If JavaScript was parsed properly the text should show up on the page. If this box is empty it means there was a problem and JavaScript was not parsed as expected.

- Bartosz then added a /test/ URL to see if the content was processed but only 5 of 12 frameworks were crawled by Google.
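The pass/fail idea behind the experiment can be sketched in a few lines: if the text only exists after JavaScript runs, it will not be in the raw HTML a non-rendering crawler receives. This is a minimal illustration, not Bartosz’s actual test harness; the two sample pages and the function name `text_in_raw_html` are hypothetical.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text nodes, skipping anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def text_in_raw_html(html: str, expected: str) -> bool:
    """True if `expected` is present in the markup before any JavaScript runs."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return expected in " ".join(parser.chunks)


# Hypothetical pages: one ships the text in HTML, the other injects it with JavaScript.
static_page = "<html><body><div id='box'>Generated article text</div></body></html>"
js_page = (
    "<html><body><div id='box'></div>"
    "<script>document.getElementById('box').textContent = 'Generated article text';</script>"
    "</body></html>"
)

static_ok = text_in_raw_html(static_page, "Generated article text")
js_ok = text_in_raw_html(js_page, "Generated article text")
```

Here `static_ok` is true and `js_ok` is false: the second page depends entirely on the crawler executing the script, which is the situation the experiment set out to measure.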

You can see the full results of the experiment here:

Main finding: Not all frameworks crawled and indexed in the same way.

Bartosz then looked at other search engines; only Google and Ask are processing JavaScript – even simple JavaScript.

Ilya Grigorik at Google said – If you fix underlying SEO errors there is no practical difference and you can run Chrome 41 to see URLs just like Googlebot.

John Mueller has said isomorphic JavaScript is ideal, but it is complicated and needs a good JS developer.

Google is not excited about JavaScript and it can kill your crawl budget.

- Another issue is that most link indexes can’t really crawl JavaScript.

Bartosz has created a new site (early beta) where you can’t visit pages without visiting the previous one. Google went one page deep with JavaScript pages but six deep with HTML.

Emily Grossman – PWAs and New JS frameworks for Mobile

Talk Summary

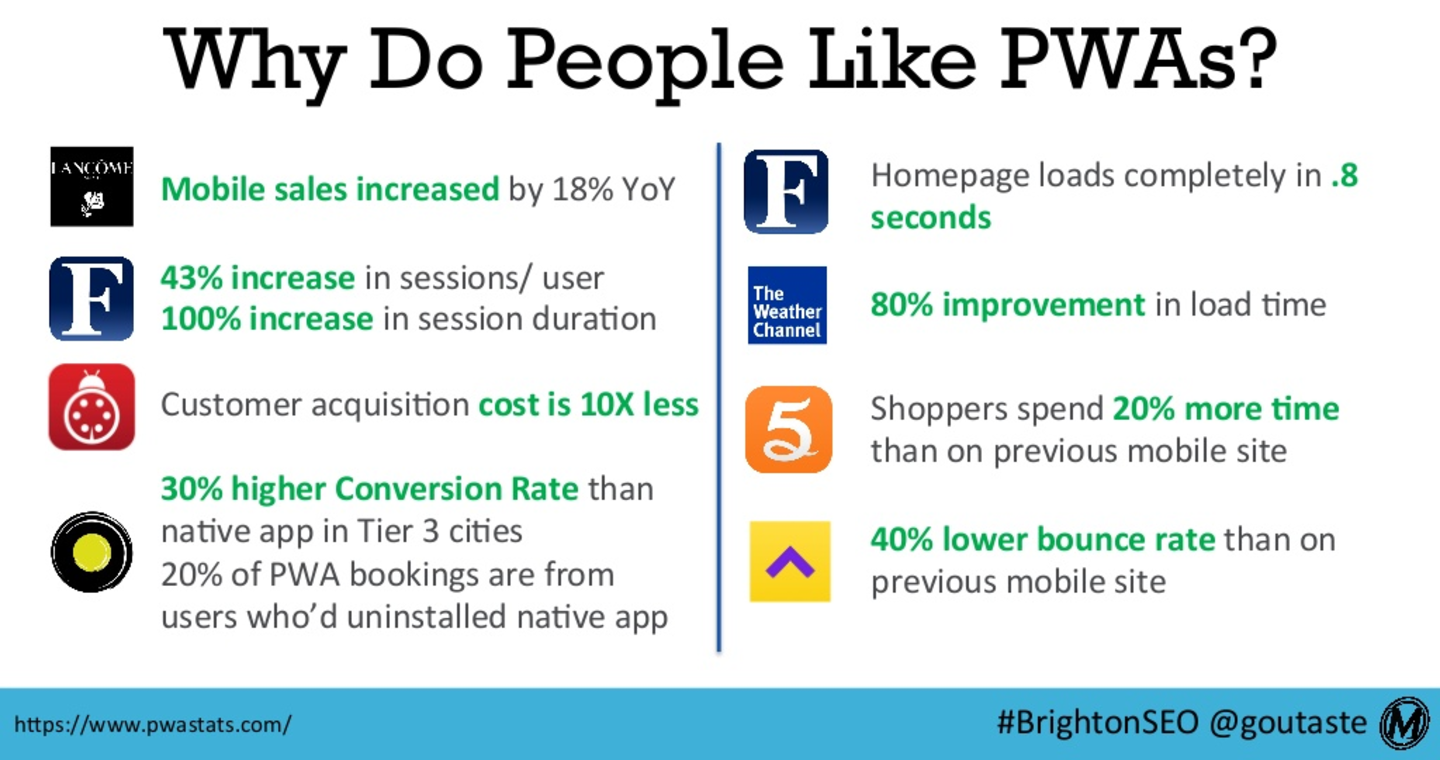

Emily’s talk covered the requirements to build Progressive Web Apps (PWAs) and many of the key considerations and technical complexities to be taken into account around perceived page speed, performance and support for old browsers. Emily also walked through some useful tactics to make sure PWAs are indexable, and optimised for mobile devices.

Key Takeaways

The JavaScript-enabled web has allowed for the creation of full web experiences that were previously only available to native apps.

- The app-like web – PWAs have changed this.

- PWAs can work offline – so users never have to see the Chrome dino again.

- They bring lots of benefits for traffic, conversion rates and the bottom line, and have benefitted many companies.

The only requirements for a PWA are:

- An app manifest that links to things like icons; you can inspect it in Chrome DevTools.

- Service worker – like air traffic controllers, intercepts requests, makes decisions around caching. Resource efficient.

- HTTPS is mandatory.

That’s all that’s needed.
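To make the first requirement concrete, here is a rough sketch of what an app manifest contains, built as a Python dictionary and serialised to JSON. The member names (`name`, `short_name`, `start_url`, `display`, `icons`) are standard Web App Manifest members; every value, including the icon paths, is a made-up placeholder, and the helper `icon_sizes` is purely illustrative.

```python
import json

# Hypothetical values; only the member names come from the Web App Manifest format.
manifest = {
    "name": "Example Shop",
    "short_name": "Shop",
    "start_url": "/",
    "display": "standalone",
    "icons": [
        {"src": "/icons/icon-192.png", "sizes": "192x192", "type": "image/png"},
        {"src": "/icons/icon-512.png", "sizes": "512x512", "type": "image/png"},
    ],
}


def icon_sizes(manifest: dict) -> list:
    """List the icon sizes the manifest links to."""
    return [icon["sizes"] for icon in manifest.get("icons", [])]


# The text a server would return for the manifest file.
manifest_json = json.dumps(manifest, indent=2)
```

A page would typically point at the resulting file with a `<link rel="manifest">` element in its head, which is also where Chrome DevTools picks it up for inspection.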

Some frameworks make it easy to create web apps, with command line interfaces for the likes of Vue, React and Preact.

Speed and performance optimisation tips

- The trade-off is adding some bloat to the code, so be aware of how much each framework adds. Vue was quickest at 1.5s.

- The benefit is that they speed things up for repeat visits due to caching

Here are Emily’s tips to optimise a PWA:

- Set up Service Worker to cache essential assets.

- Optimise initial download – Fast First Paint (FFP) is an essential metric.

- Consider trade offs with Time to Interactive (TTI).

- Track time between FFP and TTI (when users feel like they can do something on your site – essential for conversion rates).

- Can optimise TTI with code splitting.

- Prefetch – can get resources for code that hasn’t loaded – pre-empting next page they’re going to visit. There are tools that try to predict this.

- Make site feel like it’s faster by using progress bars.

- Load on mouse down events – can save 150ms.

Support for old browsers

- Polyfills can stand in for a native browser API with something compatible with older browsers.

- Safari is behind: it doesn’t support service workers and is difficult with app manifests, so you need to specify app attributes in the head or use a polyfill.

David Lockie – Using Open Source Software to Speed up your Roadmap

Talk Summary

David’s talk took a top level view of businesses and the digital sprawl that can result from inefficient data flows between different platforms. To remedy this, David spoke passionately about the benefits of utilising open source technology, which removes the risk of getting locked into expensive technologies and instead enables businesses to run lean, fast and agile programmes.

How do you bring your Experiences, Data & Architecture together? A system that does it all, or should you build your own? #BrightonSEO pic.twitter.com/kab8RzqOGN

— Pragmatic (@pragmaticweb) September 15, 2017

Key Takeaways

- Digital sprawl – Problems can arise with data flow between different systems and platforms (CMS, social CRM, financial etc.)

- Either buy Adobe (black box and hope it does everything) or build your own.

- Using open source is a good option and where Pragmatic are seeing digital battles being won.

- Using open source as glue is just less risky.

- Allows optimal pace of change at different layers.

- Avoids license lock-in.

- Reduces experimentation cost.

- Gives you options to avoid time lost waiting for feature releases.

- De-risks your platform by allowing visibility of the roadmap.

- Generally increased availability of suppliers.

- Encourages digital capability within your business.

- Companies are becoming more digitally mature, with more digitally minded C-level people who understand the value of a good data strategy.

Stacey MacNaught – Tactical, Practical Keyword Research for Today’s SEO Campaigns

Talk Summary

Stacey kicked off the mid-morning session on the main stage speaking about the changing search landscape and how keyword research is still more essential and intensive than ever. The presentation then moved on to Stacey’s tools of choice and walked us through some key processes to be used as part of a successful keyword strategy.

Key Takeaways

- The search landscape is changing – 50% of searches will be voice by 2020, home assistants are increasingly common and a lot of searches now begin on Amazon.

- Getting solutions to problem searches still isn’t great, but Google is getting better.

- Think beyond driving immediate organic sales – gain awareness for potentially interested customers.

- Identify triggers for your product or services and work out how people will search for that and their experience of that.

Use Answer the Public and Buzzsumo Question Analyser to get triggers. Other recommended tools are Kwfinder, DeepCrawl and Google Search Console.

Set realistic expectations – not all searchers are going to buy at the bottom of the funnel.

- Check out the best reviews for market leading products. Download them and put them into a word cloud. What makes someone give a 5 star review? What adjectives are they using? Experiment with these adjectives in titles and Adwords ads.

- Stats are great because people will quote them and you can gain links.

- Accuranker is the best KW tracking tool, and assisted conversions in Google Analytics are useful for attributing the success of landing pages.

- Revisit KW strategy regularly and constantly look for new opportunities.

Tactical, practical keyword research for today’s SEO campaigns by @staceycav @brightonseo #BrightonSEO #katjasays #sketchnotes pic.twitter.com/05NGQsFrS5

— Ekaterina Budnikov (@KatjaBudnikov) September 15, 2017

Sophie Coley – Answering The Public: How To Find Top-Notch Audience Insight in Search Data and How To Apply It

Talk Summary

Sophie’s insightful talk first focused on the beginnings of Answer the Public and their quest to make a visually appealing keyword research tool. The talk then turned to practical ways you can use Answer the Public to uncover contextual, behavioural, attitudinal and motivational audience insight for your online and offline marketing activities.

Key Takeaways

Answer the Public – founded to make KW research more visually appealing and convenient rather than typing out the alphabet to see what people are searching for.

Identifying and understanding your public

- Identify audiences – Look at “for” branch of wheel in Answer the Public to understand what users want.

- Use labels to further understand your audience and their lives e.g. readers and bookworms, beards and hipsters.

- Identify influencers by using the “like” branch e.g. coats like Kim Kardashian.

Benchmarking your brand

- Enter every possibility for how people are searching around your brand e.g. misspellings.

- Don’t default to content. Take a step back and ask what the best way to deal with this search behaviour is.

- Look at competition and what people are searching for around that.

Recommended tools: Hitwise, Brandwatch, WhatUsersDo

Answering the Public: How to find audience insight in search data by @ColeyBird @brightonseo #BrightonSEO #katjasays #sketchnotes pic.twitter.com/K71eD8mR6v

— Ekaterina Budnikov (@KatjaBudnikov) September 15, 2017

Kelvin Newman – Scary SERPs (and keyword creep)

Talk Summary

Kelvin finished off the morning’s talks in Auditorium 1 by highlighting some hilarious (and sometimes worrying) examples of Google’s ability to provide misinformation as it moves to the “one true answer” with voice search. Kelvin then moved on to provide some methods by which you can prioritise key phrases you want to rank and ensure you’re covering any gaps in your keyword strategy.

Key Takeaways

There are a number of instances of poor sources and incorrect information appearing in Search.

This is even worse with voice search, as it only gives one “true” answer.

Voice search is a move towards “one true answer” – a risk for Google.

There is now less precision in keyword research e.g. Adwords keyword estimates are vaguer.

Query rewriting – what you’re searching for isn’t necessarily what Google searches for.

It would seem machine learning is involved in query rewriting. It tries to predict what you’re looking for, which isn’t necessarily what you search for.

Machine learning is about making predictions.

- Basically, Google is trying to see the connections beyond the surface that aren’t immediately obvious.

- Algorithms can help understand these.

- Keyword optimisation should include phrases that search engines would expect to see around the topic you want to rank for.

Method 1

- Take top 10 results for your query, extract using textise.net and put into a word cloud.

- Provides visual prioritisation of relative importance of key phrases.

Are you covering those areas?

- Use it as a game of common words bingo. Are there any gaps that can be filled in the content on your site?

Method 2

- Take top 10 results for your query again, extract using textise.net and put it into another word cloud.

- Create a list of all the single words used on the page using Write Words.

- Create a venn diagram of the overlap to get a sense of the importance of the words that others are using.
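Both methods boil down to counting which words the ranking pages have in common and comparing that with your own page. The sketch below does this on plain text that has already been extracted (for example with textise.net); the stopword list, sample texts and function names are illustrative assumptions rather than anything shown in the talk.

```python
import re
from collections import Counter

# A deliberately tiny stopword list, just for the example.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "for", "is", "on", "with"}


def words(text: str) -> list:
    """Lowercase single words, minus the stopwords."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def pages_using_each_word(pages: list) -> Counter:
    """For every word, count how many of the ranking pages use it at least once."""
    counts = Counter()
    for text in pages:
        counts.update(set(words(text)))
    return counts


def common_words(pages: list, top_n: int = 10) -> list:
    """The words a word cloud would draw largest, most widely used first."""
    return pages_using_each_word(pages).most_common(top_n)


def gaps(pages: list, own_page: str, min_pages: int = 2) -> set:
    """Words shared by at least `min_pages` ranking pages but absent from your page."""
    counts = pages_using_each_word(pages)
    shared = {w for w, n in counts.items() if n >= min_pages}
    return shared - set(words(own_page))


# Hypothetical extracted text from three ranking pages and from your own page.
ranking_pages = [
    "Waterproof hiking boots with ankle support",
    "Best hiking boots: waterproof and lightweight",
    "Lightweight trail shoes for hiking",
]
own_page = "Our hiking boots are comfortable"

missing = gaps(ranking_pages, own_page)
```

With these samples `missing` contains "waterproof" and "lightweight": the part of the Venn diagram that competitors cover and your page does not.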

Fili Wiese – Linkbuilding 2018

Talk Summary

Kicking off the afternoon session in a packed Auditorium 2, Fili spoke about the need to build links which drive conversions and brand awareness. As an ex-Googler and a DeepCrawl CAB member, Fili shared a number of highly valuable tips and tricks to inform and benefit your link building strategy for the long term.

Key Takeaways

Google is fine with link building for discovery, but not for the sole purpose of influencing rankings or PageRank.

- Take care of liabilities – don’t want penalties.

- Update the disavow file and manage your link profile.

- Link building is necessary, but we have to forget about PageRank and other third party versions of it.

What should we focus on instead?

- What we’re aiming for is links that build conversions and brand awareness (not traffic or higher SERPs).

- Good links need to drive traffic and conversions.

How do we build those links?

- Use rel nofollow whenever possible and when in doubt.

- Set parameters within GSC.

- Always be linking but know your audience and where they are on the web.

There might be some cases where you rank low on the first page but users know your brand so they click on your result.

- Focus on long term growth and strategy.

- Clean up your backlink profile and stop wasting money on links.
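The rel nofollow tip above could be audited with a small script that lists the external links on a page which are still followed. This is a minimal sketch using only the standard library; the class name, sample markup and host names are hypothetical, and it only looks at `<a>` elements.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class FollowedLinkFinder(HTMLParser):
    """Collects external <a href> values whose rel attribute lacks 'nofollow'."""

    def __init__(self, own_host: str):
        super().__init__()
        self.own_host = own_host
        self.followed = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href") or ""
        host = urlparse(href).netloc
        rel_tokens = (attributes.get("rel") or "").lower().split()
        # Relative links have no host and count as internal here.
        if host and host != self.own_host and "nofollow" not in rel_tokens:
            self.followed.append(href)


# Hypothetical page fragment.
page = (
    '<a href="/about">About</a>'
    '<a href="https://partner.example/offer" rel="nofollow sponsored">Offer</a>'
    '<a href="https://blog.example/post">A post we like</a>'
)

finder = FollowedLinkFinder(own_host="www.mysite.example")
finder.feed(page)
followed_external = finder.followed
```

Only the link to `blog.example` is reported, since the internal link has no host and the partner link already carries nofollow.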

Dixon Jones – Creating Workflows for Link Building

Talk Summary

Do links still matter? How many links are enough? Can a page rank with no backlinks? These were just a few of the questions Dixon answered in his talk which also covered his recommended workflows for a sustainable approach to link building.

Key Takeaways

Do links still matter?

- Eric Enge – Links are the most important ranking factor.

- Google engineer Andrey Lipattsev said in October 2016 that content and links are the top two ranking factors.

- Gary Illyes said in a tweet – it is impossible to tell what links are critical, you can get the data but can’t know for sure.

How many links are enough? It’s not that simple.

- Links have wildly different strengths.

- Internal links affect the strength of a website.

- Many levels of complexity but we CAN estimate page strength.

- No correlation between ranking of the page and # of links pointing to that page.

- There is no such thing as domain level PageRank, all done at the page level.

- It is possible to rank a page with no external links.

Workflow for building links:

Identify the right influencers

- This can be difficult for agencies to do (use BuzzSumo for this)

Find sites linking to more than one site ranking for the term you want to rank for.

- Use Clique Hunter. LinkedIn is great, email is good, Facebook is ok.
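The idea of finding sites that link to more than one ranking site can be sketched as a simple count over exported backlink data. This is not how Clique Hunter itself is implemented; the function name, domains and data shape are assumptions for illustration.

```python
from collections import Counter


def shared_linkers(backlinks: dict, min_sites: int = 2) -> dict:
    """Linking domains that point at `min_sites` or more of the ranking sites.

    `backlinks` maps each ranking site to the set of domains linking to it.
    """
    counts = Counter()
    for linking_domains in backlinks.values():
        counts.update(set(linking_domains))
    return {domain: n for domain, n in counts.items() if n >= min_sites}


# Hypothetical export: ranking site -> domains that link to it.
backlinks = {
    "rival-a.example": {"news.example", "blog.example"},
    "rival-b.example": {"blog.example", "forum.example"},
    "rival-c.example": {"blog.example", "news.example"},
}

targets = shared_linkers(backlinks)
```

Here `targets` holds `blog.example` (linking to three ranking sites) and `news.example` (two), which are the more promising outreach candidates.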

Engage with influencers

- This is the hardest part as it’s about networking and communication. Favourite tool is beer. Be honest and sincere.

- Other tools include: Infusionsoft (Hubspot CRM as alternative), Sendible, email, Rapportive for Gmail, Sprout Social.

Define the digital asset

- The best idea is a unique idea, product or process. Create the market you want to move into.

Prepare the launch

- Prepare announcement and send influencers a preview so they have information before the general public.

- Connectors get buzz out of being first to talk about something new. Apple does this well. But also remind them when it’s live.

Greg Gifford – Righteous Tips for Building Totally Excellent Local Links

Talk Summary

Greg’s whirlwind of a talk focused on all aspects of building excellent local links and featured more 80’s film references than you can shake a stick at. Greg shared a comprehensive range of strategies you can use to build local links as well as explaining why building local links is completely different compared to the usual recommended tactics.

Key Takeaways

Local search ranking study 2017 – inbound links are the most important ranking signal.

Links are like votes to your site – more votes, more popularity.

Most link builders go after high DA links. Local SEO is different. Don’t care about DA, follow/nofollow. Care about links for traffic.

We’re usually talking about a handful of links or <100 links for local link building.

Google weights inbound links based on authority, relevance and trustworthiness.

Think about what value your business can provide for other businesses.

Focus on long term real world value – form meaningful partnerships.

Local links are hard to reverse engineer. Leverage links based on relationships and partnerships.

6 ways to build local links:

- Buy sponsorships

- Donate/volunteer

- Get involved in local community

- Share truly useful information

- Be creative or get random mentions

- Take advantage of existing relationships

Even more local link ideas:

- Local meetups – offer space, sponsor

- Local directories – not poor directories, useful ones

- Local review sites

- Event sponsorships

- Local resource pages

- Local blogs

- Local newspapers

- Local charities

- Local clubs/organisations

- Local calendar pages

- Local interviews – can do outreach

- Local business associations

- Local food banks

- Local shelters

- Local schools

- Ethnic business directories

- Local art festivals

- Local guides

- Homeowners associations

- Neighbourhood Watch sites

Local link building process:

- Pull your links from tool of choice.

- Pull competitor links.

- Identify opportunities – pull links from similar businesses in other cities.

- Create spreadsheet of all opportunities, present list to client and ask what to go after.

- Rinse and repeat every quarter – might only get 1 or 2 out of 30-odd opportunities.

- Don’t mention links right off the bat, as the people you contact might not be SEO savvy.

- Focus on the value you can provide for the target site’s user experience.
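The first four steps of this process amount to a set difference followed by a spreadsheet export. A rough sketch, assuming you have already pulled linking domains for your own site and for competitors from your tool of choice (all names and domains below are hypothetical):

```python
import csv
import io


def opportunities(own_links: set, competitor_links: dict) -> list:
    """Domains that link to competitors but not to you, with who they link to."""
    found = {}
    for competitor, domains in competitor_links.items():
        for domain in domains:
            if domain not in own_links:
                found.setdefault(domain, []).append(competitor)
    return sorted((domain, sorted(comps)) for domain, comps in found.items())


def to_csv(rows: list) -> str:
    """Render the opportunity list as CSV text to share with the client."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["linking_domain", "links_to"])
    for domain, comps in rows:
        writer.writerow([domain, "; ".join(comps)])
    return buffer.getvalue()


# Hypothetical exports.
own_links = {"chamber.example"}
competitor_links = {
    "rival-a.example": {"chamber.example", "foodbank.example"},
    "rival-b.example": {"foodbank.example", "school.example"},
}

rows = opportunities(own_links, competitor_links)
sheet = to_csv(rows)
```

Rerunning the same script each quarter against fresh exports gives the "rinse and repeat" list without rebuilding the spreadsheet by hand.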

Jes Scholz – Marketing in 360 degrees: Photos, Videos and VR

Talk Summary

Jes’ forward thinking talk looked at the growing importance of VR and the core reasons behind why this technology is set to see phenomenal growth over the next couple of years. Jes presented two exceptional case studies of brands that have used VR to immerse and influence their audiences, as well as reporting on the excellent engagement seen by 360 content on social channels.

Marketing in 360 degrees by @jes_scholz #BrightonSEO #katjasays #sketchnotes pic.twitter.com/pHf8Lkbtv2

— Ekaterina Budnikov (@KatjaBudnikov) September 15, 2017

Key Takeaways

VR is a new communication layer of marketing. Website as primary contact point is coming to an end.

VR is immersive

- Makes you feel as if you’re part of the virtual world.

- VR has received a large amount of investment.

- VR traffic is going to increase 61-fold by 2020.

NY Times case study – How do you get people to care about 30 million people displaced by war? Send out 1.3 million Google cardboard devices and immerse your audience in an emotional story.

Merrell Virtual Hike – Move from product testing to product experience. Oculus Rift VR experience. Inspired people to go hiking.

Virtual experience can influence consumer behavior and inspire new desires.

These two use cases are exceptions, not the norm.

81% will tell their friends about a virtual experience = instant social impact

Need to know in advance what you want your audience to think and feel during and after the experience.

Key points about VR experiences are accessibility, scalability and lifespan.

- Treat VR as a channel and a commitment, not a campaign. However, technology is not quite there yet.

360 photos and videos are here.

- Can get beyond the screen with Snapchat, YouTube and Facebook.

- Low cost investment with increased engagement. A 360 photo received 3x more likes, 6x more comments and 9x more shares.

- Content is more critical than format. 360 must enhance the message. 360 won’t always increase engagement.

- 360 content offers new experiences and fosters brand affinity.

Rebecca Brown – Why you Should Scrap your “Content” Budget Line

Talk Summary

Rebecca’s persuasive talk centred on scrapping your content budget and seeing content marketing as a way of enhancing existing channels. Rebecca then outlined the process Builtvisible use as part of their content strategy, ensuring it is specific, realistic and profitable.

Key Takeaways

Are we paying too much or too little for content making and is it converting the way we want it to?

- Many metrics for measuring content performance and we’re picking and choosing what to measure based on what looks best for individual pieces.

- Content should be concerned with bettering existing channels, not having its own budget.

- A common approach is to build a keyword list, expand, categorise and prioritise. Problem is that you get volume when what you need is opportunity, which is specific, realistic and profitable.

The process Builtvisible use:

- Start with foundational research – search behaviour, user intent, customer journey.

- SERP analysis – What is ranking? Why are things ranking?

- Look at old content that is ranking and collaborate with its owners to update it and work your brand into it.

- Think about how you can gain visibility in aggregator sites which you can see as other search engines.

Effort and tactics

- What is the potential gain?

- What are the proposed tactics?

- How much time and effort is required?

Budgeting

Define the size of the opportunity, define the user intent, align this with the most relevant tactic and then allocate spend within your channel.

Why you should scrap your content budget line by @RebeccaGraceB #BrightonSEO #katjasays #sketchnotes pic.twitter.com/W1j9yWzJfc

— Ekaterina Budnikov (@KatjaBudnikov) September 15, 2017

Jon Myers – Links, Log Files, GSC and everything in between

Talk Summary

Jon’s informative talk focused on the story of one URL and the power of adding links, Search Console, Analytics and log file data to crawls to better understand each page on your site and its indexability. Jon introduced the concept of your search universe where these internal and external data sources are brought together to inform technical SEO initiatives.

Key Takeaways

Adding external and internal data sources to crawl data is a powerful way of gaining insights about your search universe.

Things to consider when combining backlinks with crawl data:

- Backlinks Authority distribution?

- Where are the backlinks landing?

- Positive/Negative Backlinks?

- Backlinks to low DeepRank/high level pages?

- Backlinks to orphaned pages?

- Backlinks to broken pages/non-indexable pages?
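Several of these questions can be answered by joining a backlink export against crawl data on the target URL. The sketch below is one possible shape for that join; the field names (`status`, `indexable`, `internal_inlinks`) and sample URLs are assumptions, not the format of any particular tool.

```python
def backlink_target_issues(backlinks: list, crawl: dict) -> dict:
    """Group backlink target URLs by the first problem the crawl data shows.

    `backlinks` is a list of (source_url, target_url) pairs.
    `crawl` maps each crawled URL to a dict with hypothetical fields.
    """
    issues = {"not_in_crawl": set(), "broken": set(), "non_indexable": set(), "orphaned": set()}
    for _source, target in backlinks:
        page = crawl.get(target)
        if page is None:
            issues["not_in_crawl"].add(target)
        elif page["status"] >= 400:
            issues["broken"].add(target)
        elif not page["indexable"]:
            issues["non_indexable"].add(target)
        elif page["internal_inlinks"] == 0:
            issues["orphaned"].add(target)
    return issues


# Hypothetical data.
crawl = {
    "/guide": {"status": 200, "indexable": True, "internal_inlinks": 12},
    "/old-offer": {"status": 404, "indexable": False, "internal_inlinks": 0},
    "/print-version": {"status": 200, "indexable": False, "internal_inlinks": 3},
    "/landing-2016": {"status": 200, "indexable": True, "internal_inlinks": 0},
}
backlinks = [
    ("https://news.example/a", "/guide"),
    ("https://blog.example/b", "/old-offer"),
    ("https://blog.example/c", "/print-version"),
    ("https://forum.example/d", "/landing-2016"),
    ("https://forum.example/e", "/never-crawled"),
]

report = backlink_target_issues(backlinks, crawl)
```

Each non-empty bucket in `report` is a list of pages where link equity is arriving but is likely being wasted.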

Log files

Incorporating log file data into a crawl reveals where crawl budget is being wasted.

Identify non-indexable pages receiving bot requests:

- Hits to redirect chains

- Hits to 404/410 status codes

- Hits to soft 404 or orphaned pages

- Hits to 3xx status codes
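As a rough sketch of how such hits could be pulled out of a server log, the snippet below parses lines in the common "combined" access log layout and tallies Googlebot requests by status code. The regular expression, sample lines and function names are illustrative assumptions; real logs vary, and matching on the user agent string alone does not prove a request really came from Google.

```python
import re
from collections import Counter

# Assumes the combined log format: ip ident user [time] "request" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines: list) -> list:
    """Return (path, status) for every line whose user agent mentions Googlebot."""
    hits = []
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits.append((match.group("path"), int(match.group("status"))))
    return hits


def wasted_hits(hits: list) -> list:
    """Bot requests that did not end in a 200 response."""
    return [(path, status) for path, status in hits if status != 200]


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Hypothetical log lines.
sample_log = [
    f'66.249.66.1 - - [15/Sep/2017:10:00:00 +0000] "GET /old-page HTTP/1.1" 301 0 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [15/Sep/2017:10:00:05 +0000] "GET /missing HTTP/1.1" 404 512 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [15/Sep/2017:10:00:09 +0000] "GET /guide HTTP/1.1" 200 9120 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [15/Sep/2017:10:00:11 +0000] "GET /missing HTTP/1.1" 404 512 "-" "Mozilla/5.0"',
]

hits = googlebot_hits(sample_log)
by_status = Counter(status for _path, status in hits)
```

Joining the paths from `wasted_hits(hits)` back to crawl data then shows which redirects and error pages are consuming crawl budget.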

Find out how frequently search engine bots hit your site.

For pages receiving low bot requests you could consider:

- Adding more internal links to that page

- Adding a “Last Modified” date to the sitemap

- Making sure no non-indexable pages are included in the sitemap

- Splitting out the sitemap into smaller ones, including one sitemap for new pages
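Two of these suggestions, adding a last modified date and keeping non-indexable pages out of the sitemap, can be combined in a small generator. This is a minimal sketch with the standard library; the page list and function name are hypothetical, while `urlset`, `url`, `loc` and `lastmod` are the element names used by the sitemap protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list) -> str:
    """Build sitemap XML from (url, last_modified, indexable) tuples.

    Non-indexable pages are skipped; dates are expected as YYYY-MM-DD strings.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified, indexable in pages:
        if not indexable:
            continue
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages.
pages = [
    ("https://www.example.com/guide", "2017-09-15", True),
    ("https://www.example.com/print-version", "2017-09-01", False),
]

sitemap_xml = build_sitemap(pages)
```

Calling `build_sitemap` separately on a list of recently published URLs is one way to produce the dedicated sitemap for new pages.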

Understand How Bots Crawl Your Site With Mobile and Desktop User Agents.

Split out hits from a desktop bot and the mobile bot, so you can understand how a search engine is using crawl budget to understand your site’s mobile setup.

- Responsive sites: Search engines use both user agents on the same URL to validate that the same content is returned.

- Dynamic sites: Search engines need to crawl with both user agents to validate the mobile version.

- Separate mobile sites: Search engines need to crawl the dedicated mobile URLs with a mobile user agent to validate the pages and confirm the content matches the desktop pages.
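Splitting bot hits by user agent could look something like the sketch below. It assumes the smartphone crawler's user agent contains both "Googlebot" and "Mobile" while the desktop one contains only "Googlebot"; the sample strings, URLs and function names are illustrative, and other search engines would need their own rules.

```python
from collections import defaultdict

DESKTOP_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
MOBILE_UA = (
    "Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/41.0.2272.96 Mobile Safari/537.36 "
    "(compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
)


def googlebot_type(user_agent: str):
    """Return 'mobile', 'desktop', or None if the agent is not Googlebot."""
    if "Googlebot" not in user_agent:
        return None
    # Assumption: only the smartphone crawler includes the "Mobile" token.
    return "mobile" if "Mobile" in user_agent else "desktop"


def coverage_by_bot(hits: list) -> dict:
    """Map each URL to the set of Googlebot types that requested it."""
    coverage = defaultdict(set)
    for url, user_agent in hits:
        bot = googlebot_type(user_agent)
        if bot:
            coverage[url].add(bot)
    return dict(coverage)


# Hypothetical (url, user_agent) pairs taken from log files.
hits = [
    ("/guide", DESKTOP_UA),
    ("/guide", MOBILE_UA),
    ("/pricing", DESKTOP_UA),
    ("/pricing", "Mozilla/5.0 (Windows NT 10.0)"),
]

coverage = coverage_by_bot(hits)
single_agent_urls = sorted(url for url, bots in coverage.items() if len(bots) == 1)
```

On a responsive or dynamic site, URLs that only ever appear in `single_agent_urls` have not yet been validated with both user agents.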

What can GSC add?

- Find poor experience pages with traffic.

- Find pages with image search traffic, with broken images – a well known retailer makes 10% of revenue from image search alone.

Adding traffic to the equation with Google Analytics.

- Need to add consumer power into the mix.

- Where do your customers interact with your site and what do they convert on?

- Google drives awareness but people drive ROI!

Need ability to have holistic overview of what is happening on a page combining log files, GSC, GA and backlinks.

Need tool in place that can scale up for a large site.

Automation is needed.

Your search universe will be different from others and you need to have a deep understanding of it.

Links, log files, GSC and everything in between by @JonDMyers #BrightonSEO #katjasays #sketchnotes pic.twitter.com/zyX4hXgV7P

— Ekaterina Budnikov (@KatjaBudnikov) September 15, 2017